Overview

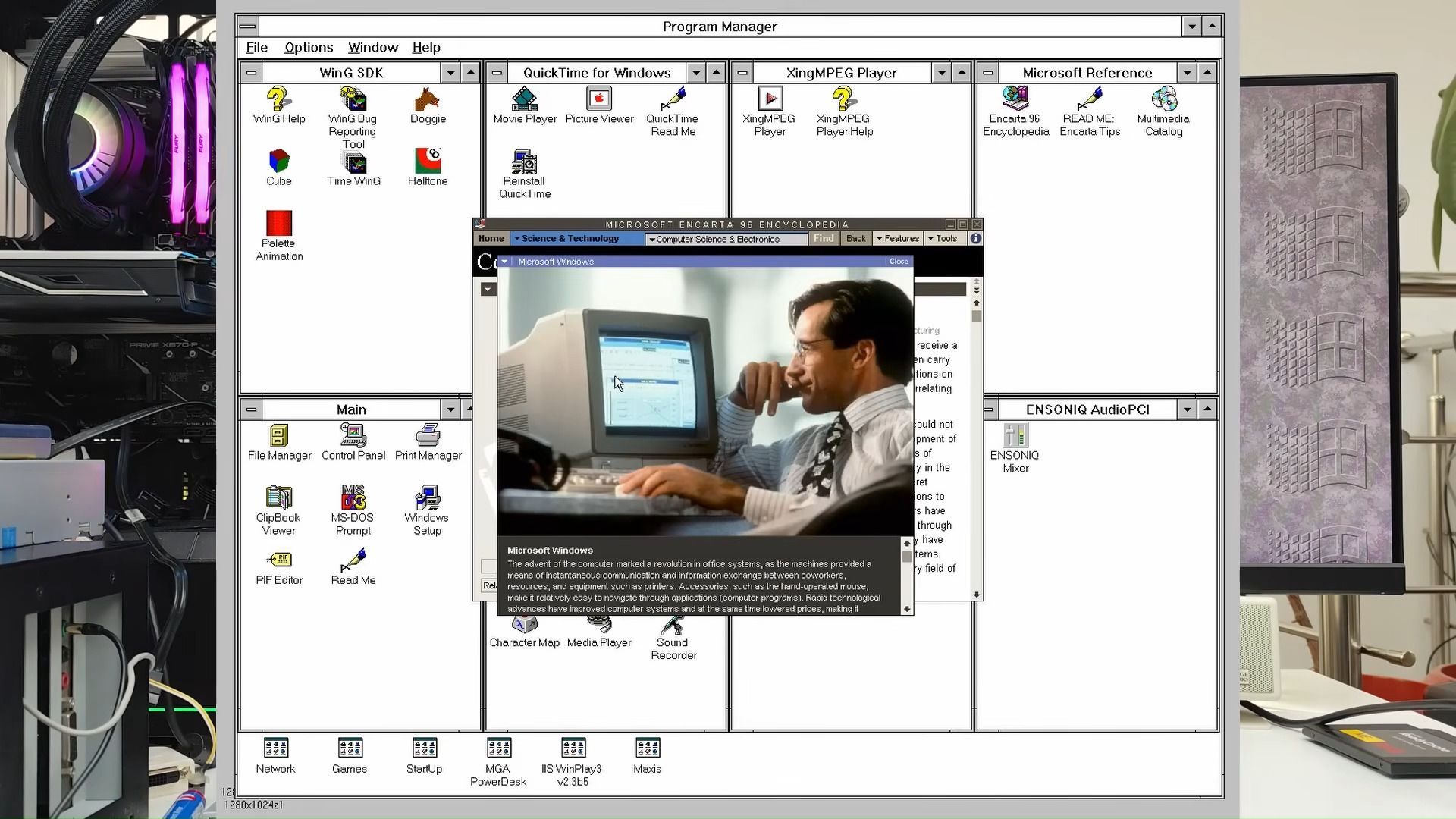

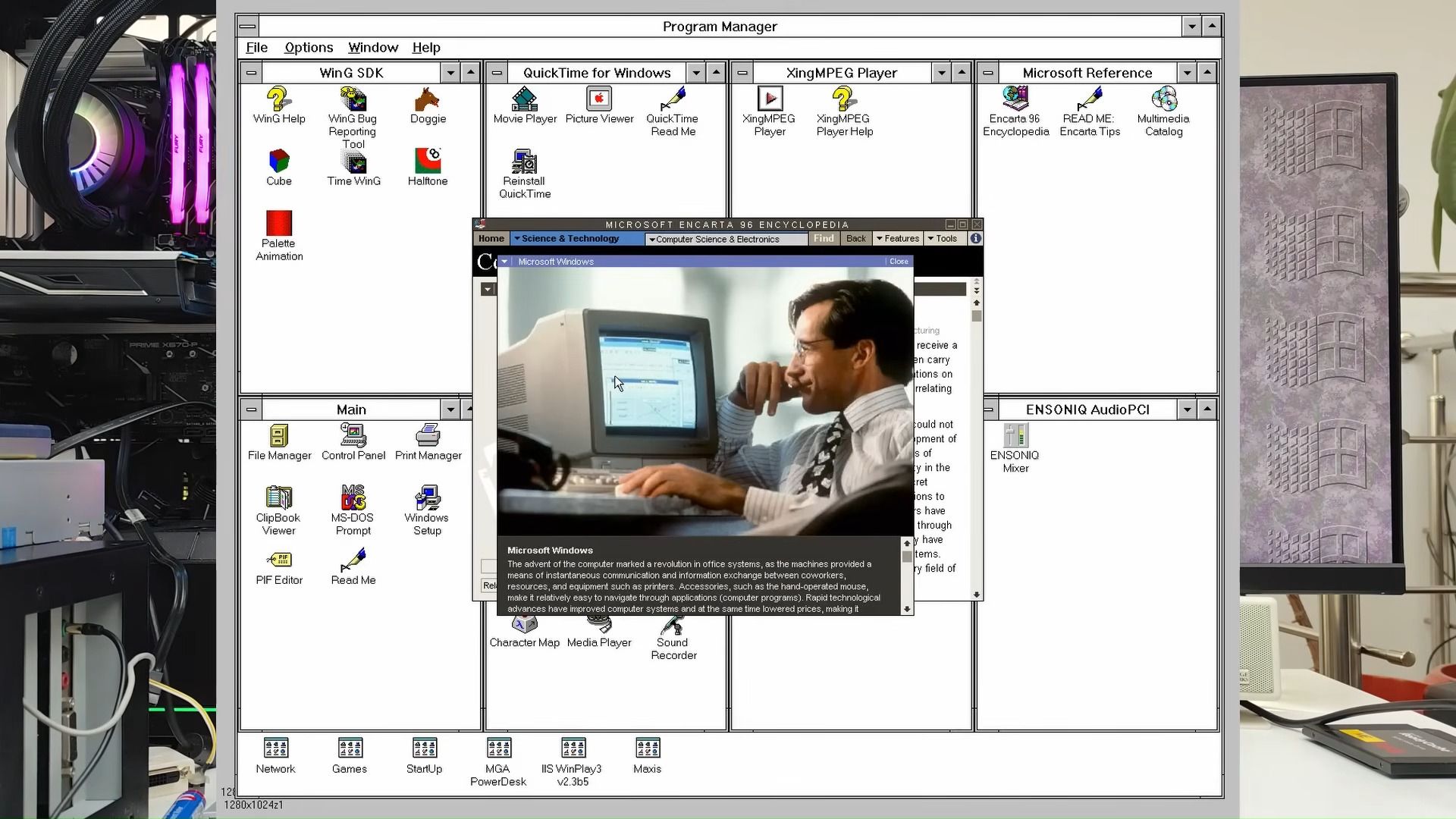

A modder installed Windows 3.1, a 1992 operating system, directly on a system running a Ryzen 9 9900X and RTX 5060 Ti, using a physical floppy disk drive. No emulation, no virtualization. The OS ran natively on 2026 hardware, bridging a 34-year gap between software and silicon.

The enabler was a legacy BIOS compatibility mode built into the Asus motherboard. That feature allowed modern hardware to correctly interpret the low-level commands of a vintage operating system. It is a rare demonstration that the foundational architecture of x86 computing remains backward-compatible across decades.

The Mechanics of Time Travel Computing

Running Windows 3.1 on modern architecture is technically demanding. Operating systems and hardware of the early 1990s worked under very different assumptions than today's: CPU operating modes, memory addressing, and peripheral interfaces have all changed fundamentally since then. For instance, DOS, which Windows 3.1 is launched from, starts in the processor's 16-bit real mode, where software can directly address only about 1 MiB of memory, a limit that would seem laughably restrictive to a contemporary engineer.
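To make the early-1990s memory addressing limits concrete, here is a minimal sketch of how x86 real mode turns a 16-bit segment and a 16-bit offset into a physical address. The function name `real_mode_address` and the `a20_enabled` parameter are hypothetical names chosen for illustration; nothing here is taken from the modder's setup.

```python
def real_mode_address(segment: int, offset: int, a20_enabled: bool = False) -> int:
    """Return the physical address for a real-mode segment:offset pair.

    Hypothetical helper for illustration only.
    With the A20 line disabled, addresses wrap at 1 MiB (20 bits),
    as they did on the original 8086.
    """
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must each fit in 16 bits")
    linear = (segment << 4) + offset  # segment * 16 + offset
    return linear if a20_enabled else linear & 0xFFFFF


# The color text-mode video buffer sits at segment 0xB800.
print(hex(real_mode_address(0xB800, 0x0000)))  # 0xb8000

# The highest reachable pair wraps around unless A20 is enabled.
print(hex(real_mode_address(0xFFFF, 0xFFFF)))        # 0xffef
print(hex(real_mode_address(0xFFFF, 0xFFFF, True)))  # 0x10ffef
```

The 20-bit result is why roughly 1 MiB is the ceiling in this mode, no matter how much RAM is physically installed.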

The installation, carried out from a physical floppy disk drive, confirms that the OS was talking to the hardware directly rather than through the abstraction layers of a virtual machine. The Ryzen processor is not being forced into anything foreign here: x86 CPUs still implement the legacy operating modes that DOS and Windows 3.1 rely on, so the old code runs as ordinary native instructions. That the RTX 5060 Ti, a GPU capable of rendering complex ray-traced scenes at high resolutions, could also serve the display needs of a 1992 OS shows how far backward compatibility extends at the firmware level.
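Floppy access is a good example of how low-level this interaction is. Legacy BIOS disk services address a floppy by cylinder, head, and sector (CHS) rather than by a flat block number. The sketch below converts between the two, assuming a standard 1.44 MB 3.5-inch disk (80 cylinders, 2 heads, 18 sectors per track, 512-byte sectors). The source does not say which disk format was used, so the geometry and all names are assumptions for illustration.

```python
# Assumed geometry of a 1.44 MB 3.5-inch floppy (not stated in the source).
CYLINDERS = 80
HEADS = 2
SECTORS_PER_TRACK = 18
BYTES_PER_SECTOR = 512


def chs_to_lba(cylinder: int, head: int, sector: int) -> int:
    """Convert a CHS address to a zero-based logical block number.

    Sectors are numbered from 1; cylinders and heads from 0.
    """
    if not (0 <= cylinder < CYLINDERS and 0 <= head < HEADS
            and 1 <= sector <= SECTORS_PER_TRACK):
        raise ValueError("CHS address outside the assumed geometry")
    return (cylinder * HEADS + head) * SECTORS_PER_TRACK + (sector - 1)


def lba_to_chs(lba: int) -> tuple[int, int, int]:
    """Convert a zero-based logical block number back to (C, H, S)."""
    total = CYLINDERS * HEADS * SECTORS_PER_TRACK
    if not 0 <= lba < total:
        raise ValueError("block number outside the assumed geometry")
    cylinder = lba // (HEADS * SECTORS_PER_TRACK)
    head = (lba // SECTORS_PER_TRACK) % HEADS
    sector = lba % SECTORS_PER_TRACK + 1
    return cylinder, head, sector


capacity_bytes = CYLINDERS * HEADS * SECTORS_PER_TRACK * BYTES_PER_SECTOR
print(capacity_bytes)        # 1474560
print(chs_to_lba(0, 0, 1))   # 0, the boot sector
print(lba_to_chs(2879))      # (79, 1, 18), the last sector
```

Under this geometry the disk holds 2,880 sectors, which is where the familiar "1.44 MB" label comes from.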

The result hinges on the motherboard’s BIOS implementation. The 'classic BIOS' functionality acts as a translator and gatekeeper: it initializes the hardware and presents the legacy firmware interfaces that DOS-era software expects, so that Windows 3.1 sees a familiar hardware profile despite the gigabytes of modern memory and the multi-core processor underneath. This is not emulation of a 1992 PC. The code still runs natively on the CPU, and the firmware supplies the legacy interface that a UEFI-only boot path would omit.

Analyzing the BIOS as a Compatibility Bridge

The motherboard’s BIOS is central to this demonstration. Firmware is usually treated as a static component that matters only during boot, but here it served as the compatibility layer that lets the 2026 hardware communicate with the 1992 software stack.

Most modern hardware manufacturers tune their firmware for current operating systems, prioritizing stability and performance for Windows 11 or whatever the mainstream OS is at the time. Reliably supporting software that expects ISA-era device conventions, limited memory addressing, and specific timing behavior requires the firmware to preserve a body of historical hardware behavior. That this still works is notable, and it suggests that at least some current boards carry more thorough legacy support than is commonly assumed.

For enthusiasts and specialized industrial users, this capability is valuable. It suggests that high-end modern platforms are not just disposable stepping stones to the next processor generation. They retain enough architectural flexibility to support niche, mission-critical legacy applications that cannot easily be ported, or emulated without performance degradation. The successful run shows that the hardware remains adaptable, provided the firmware is engineered to bridge the gap.

The Future of Legacy Hardware and Computing

The feasibility of this bare-metal run has broader implications for how the industry views technological obsolescence. Historically, when a new generation of hardware arrived, the supporting infrastructure for older systems often crumbled, leading to the abandonment of valuable, specialized software. A single demonstration does not establish a trend, but it hints at a possible shift toward deliberate, deep-level backward compatibility in core components like the motherboard.

From a commercial standpoint, this could open up new markets for specialized industrial computing. Many critical pieces of infrastructure, from scientific instruments to manufacturing control systems, still rely on decades-old proprietary software. If modern hardware platforms can support these legacy systems reliably and efficiently, without costly full-scale hardware overhauls, the economic barrier to maintaining critical infrastructure could drop substantially.

Furthermore, for the enthusiast community, the feat validates the pursuit of deep technical understanding. It shifts the focus from simply running old software in a VM to making it run natively, forcing a deeper engagement with the physical layer of computing. This level of hands-on engineering is a powerful counter-narrative to the often-abstracted nature of modern cloud computing, reminding the industry that the physical interaction between software and silicon remains the ultimate determinant of capability.