Overview

The standard Windows Task Manager is a foundational utility, yet its reporting on CPU utilization is frequently misleading. A former Microsoft engineer recently detailed why the tool's metrics often fail to reflect true system load, exposing a deep flaw in how the operating system reports resource consumption. The issue is not a minor bug; it stems from the way Windows aggregates kernel-level data and presents it to user-space applications.

This analysis reveals that the visible CPU percentage often represents only a fraction of the actual computational load, failing to account for critical scheduling overheads and internal system processes. The complexity lies in the separation between the reported usage and the actual time spent executing instructions across various CPU cores.

The engineer introduced a low-level solution that bypasses the standard API calls, providing a more accurate, real-time view of system resource allocation. This method requires a deeper understanding of Windows internals, moving far beyond the simple GUI metrics most users rely on.

The Flaw in User-Space Reporting

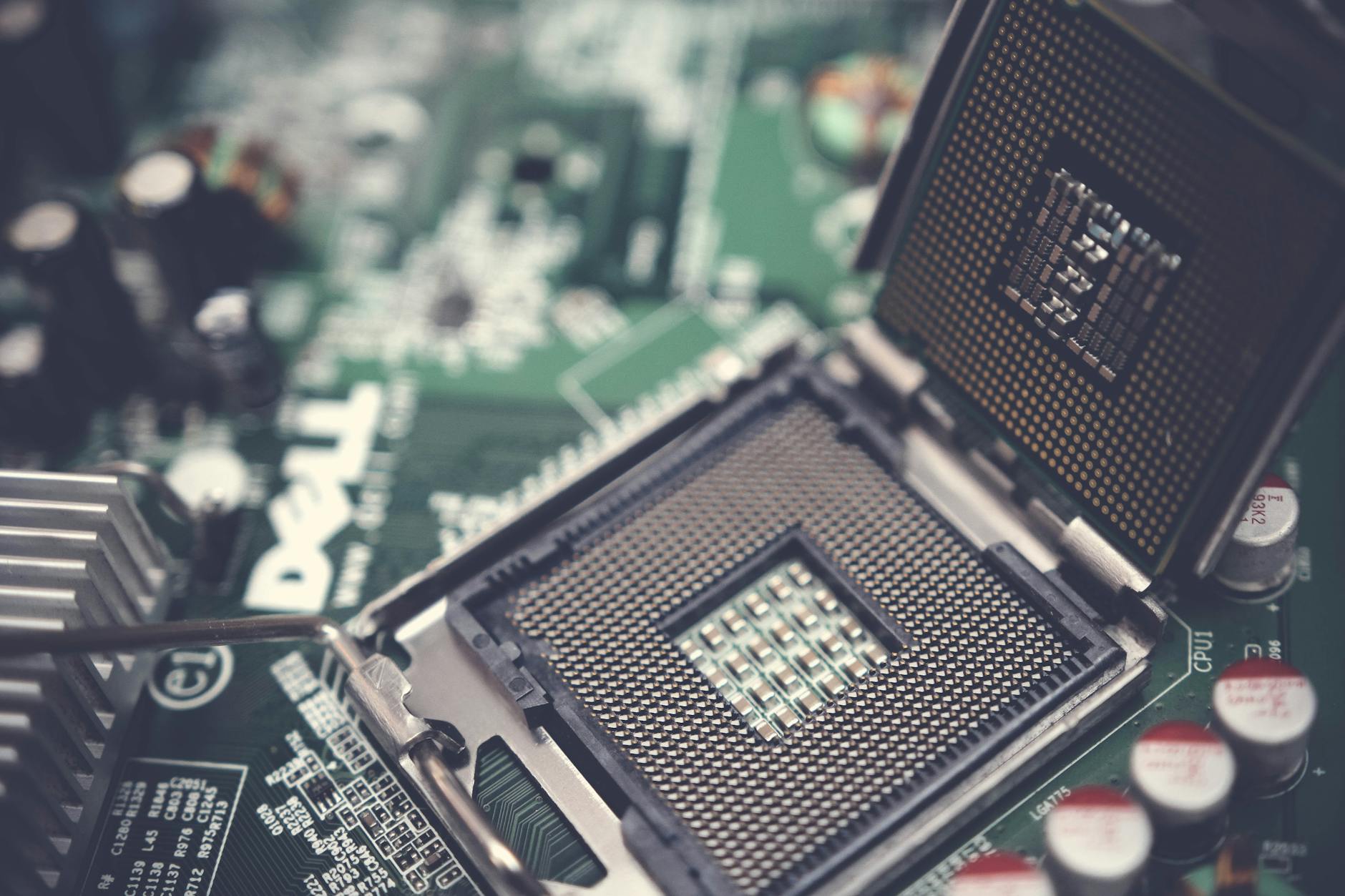

The core problem identified by the engineer centers on the distinction between user-mode processes and kernel-mode operations. When a process executes, the Task Manager reports the CPU time consumed by that process. However, modern operating systems also spend significant time on transitions between user mode and kernel mode and on switching the CPU from one thread to another, the latter being known as context switching.

These context switches, while essential for multitasking, consume measurable CPU cycles. The standard Task Manager metrics often fail to accurately attribute this overhead. Instead, they present a sanitized view, making individual applications appear less demanding than they truly are. This omission creates a false sense of performance headroom, leading users to misdiagnose performance bottlenecks.
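To get a feel for how much CPU this overhead can represent, a back-of-the-envelope estimate helps. The sketch below is illustrative only: the function name and all figures (switch rate, cost per switch, core count) are assumptions, not measurements from the source.

```python
def context_switch_overhead(switches_per_sec: float,
                            cost_us_per_switch: float,
                            logical_cores: int) -> float:
    """Estimate the share of total CPU capacity spent on switching (0..1)."""
    if logical_cores <= 0:
        raise ValueError("logical_cores must be positive")
    # CPU-seconds consumed by switching during each second of wall time.
    busy_seconds = switches_per_sec * cost_us_per_switch / 1_000_000
    # Spread that cost across all logical cores to get a capacity share.
    return busy_seconds / logical_cores


# Hypothetical figures: 50,000 switches/s, 4 microseconds each, 8 logical cores.
share = context_switch_overhead(50_000, 4.0, 8)
print(f"{share:.2%} of CPU capacity")
```

The real cost of a switch varies widely with cache and TLB effects, so the per-switch figure is the weakest input in this estimate.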

Furthermore, a single CPU percentage does not separate time spent computing from time spent waiting on I/O. A demanding application might be limited not by processing power but by slow disk reads or network latency. Anyone reading only the CPU figure can miss that the bottleneck is elsewhere and head down the wrong troubleshooting path.
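One simple way to tell computing from waiting is to compare the CPU time a piece of work consumes with the wall-clock time it takes. The following is a minimal sketch using Python's standard `time` module; the helper names `cpu_share`, `waiting`, and `computing` are hypothetical, and `time.sleep` merely stands in for a blocking disk or network call.

```python
import time


def cpu_share(work) -> float:
    """Run work() and return process CPU time divided by elapsed wall time."""
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    work()
    cpu_used = time.process_time() - cpu_start
    wall_used = time.perf_counter() - wall_start
    return cpu_used / wall_used if wall_used > 0 else 0.0


def waiting():
    time.sleep(0.2)  # stands in for a blocking disk or network call


def computing():
    deadline = time.perf_counter() + 0.2
    while time.perf_counter() < deadline:
        pass  # spin to burn CPU


print(f"waiting:   {cpu_share(waiting):.2f}")
print(f"computing: {cpu_share(computing):.2f}")
```

A ratio near zero suggests the work is mostly blocked on something other than the CPU, while a ratio near one suggests it is compute-bound.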

Deconstructing the Metrics: Kernel vs. User Load

To achieve accurate monitoring, one must look beneath the surface-level percentage. The engineer’s proposed solution involves monitoring raw performance counters directly from the operating system kernel, bypassing the standard Windows API layers that mediate the data. This approach allows for the capture of metrics that are otherwise invisible to standard diagnostic tools.

Specifically, the solution focuses on tracking the time spent in different execution states. By monitoring the kernel's internal scheduling queues, it becomes possible to quantify the overhead associated with thread management and resource arbitration. This level of detail is critical for understanding the true cost of multitasking in a modern, multi-core environment.
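The source does not show how the per-state times are obtained, so the sketch below uses a simpler stand-in: two snapshots of cumulative idle, kernel, and user tick counters, differenced to get the share of each state over the interval. The `CpuTimes` type and the sample values are hypothetical. The assumption that the kernel counter includes idle time follows the convention documented for the Win32 `GetSystemTimes` call; other counter sources may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuTimes:
    idle: int    # cumulative idle ticks
    kernel: int  # cumulative kernel ticks, assumed to INCLUDE idle ticks
    user: int    # cumulative user-mode ticks


def state_shares(before: CpuTimes, after: CpuTimes) -> dict:
    """Return the fraction of the interval spent idle, in kernel, and in user mode."""
    idle = after.idle - before.idle
    # Remove idle from the kernel delta to get time actually executing kernel code.
    kernel = (after.kernel - before.kernel) - idle
    user = after.user - before.user
    total = idle + kernel + user
    if total <= 0:
        return {"idle": 0.0, "kernel": 0.0, "user": 0.0}
    return {"idle": idle / total, "kernel": kernel / total, "user": user / total}


# Hypothetical snapshots taken one sampling interval apart.
first = CpuTimes(idle=1000, kernel=1500, user=400)
second = CpuTimes(idle=1600, kernel=2300, user=600)
print(state_shares(first, second))
```

Forgetting to subtract idle from the kernel delta is a common mistake with this convention and inflates the apparent kernel load.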

What makes the task difficult is that the required data is not packaged neatly. It is scattered across various low-level system calls and performance counters that must be correlated in real time. Implementing this requires writing code that interacts directly with the Windows Native API, a significant departure from typical high-level scripting or GUI development.

The Unique Solution: Bypassing the API Layer

The proposed fix involves developing a specialized monitoring agent that doesn't rely on the Task Manager’s internal data feeds. Instead, it taps into the underlying performance monitoring unit (PMU) data. This is a sophisticated technique that reads raw hardware performance counters, providing a direct measure of CPU cycles consumed, independent of the operating system's interpretation layer.

By reading these raw counters, the new tool can calculate a far more precise utilization percentage. It accounts for the cumulative overhead of context switching, interrupt handling, and kernel scheduling time, providing a holistic view of the CPU's true workload.
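The arithmetic behind a cycle-based utilization figure can be sketched as follows. This is not the engineer's implementation: the function name, the idea of a fixed reference frequency, and the sample numbers are all assumptions, and reading the actual hardware counters is outside the scope of the example.

```python
def utilization_from_cycles(busy_cycles_delta: int,
                            interval_s: float,
                            reference_hz: float) -> float:
    """Return utilization (0..1) as busy cycles over cycles available in the interval."""
    available = interval_s * reference_hz
    if available <= 0:
        raise ValueError("interval_s and reference_hz must be positive")
    # Clamp at 1.0 in case the counter and the clock were sampled slightly apart.
    return min(busy_cycles_delta / available, 1.0)


# Hypothetical sample: 1.5 billion busy cycles in 1 s on a 3 GHz reference clock.
print(utilization_from_cycles(1_500_000_000, 1.0, 3.0e9))
```

Because the busy-cycle count includes cycles spent in interrupt handlers and the scheduler, a figure computed this way covers overhead that a per-process time total would leave out, provided the counter is one that keeps counting in kernel mode.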

This bypass mechanism fundamentally changes the diagnostic capability. Instead of merely telling the user what is using the CPU, the new method explains how the CPU time is being spent: in pure computation, waiting for memory access, or managing system overhead. For advanced users and system architects, this level of granularity is invaluable for performance tuning and optimization.
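A breakdown of this kind might be computed along the following lines. The three categories come from the text above, but the counter names, the assumption that the categories do not overlap, and the sample values are hypothetical.

```python
def cycle_breakdown(total_cycles: int,
                    memory_stall_cycles: int,
                    overhead_cycles: int) -> dict:
    """Split busy cycles into compute, memory-wait, and system-overhead shares."""
    if total_cycles <= 0:
        raise ValueError("total_cycles must be positive")
    if memory_stall_cycles + overhead_cycles > total_cycles:
        raise ValueError("component cycles exceed total_cycles")
    # Assumes the categories are disjoint, so compute is whatever remains.
    compute = total_cycles - memory_stall_cycles - overhead_cycles
    return {
        "compute": compute / total_cycles,
        "memory_wait": memory_stall_cycles / total_cycles,
        "overhead": overhead_cycles / total_cycles,
    }


# Hypothetical counter deltas for one sampling interval.
print(cycle_breakdown(1_000_000, 250_000, 150_000))
```

In practice stall and overhead cycles can overlap, for example when kernel code itself waits on memory, so a real tool would need a rule for attributing shared cycles.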