Overview

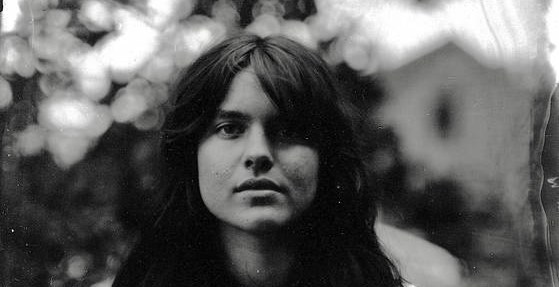

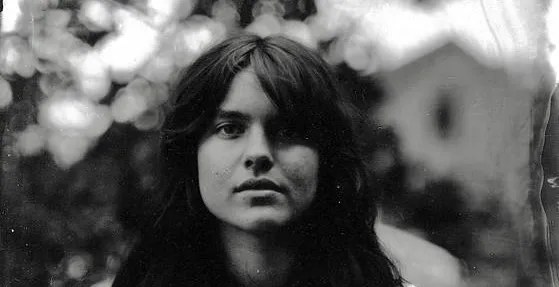

A folk musician who specializes in public domain ballads recently became a target of two distinct threats: AI deepfakes and aggressive copyright trolling. Murphy Campbell, known for a traditional acoustic style, had performances flagged and targeted even though much of the repertoire is in the public domain. The incident highlights a vulnerability in the digital creative economy, where established intellectual property (IP) frameworks are struggling to keep pace with generative AI and automated content enforcement.

The controversy centers on a simple fact: even when Campbell performs songs whose copyrights have long expired, the platform’s automated content recognition systems, specifically those that handle copyright claims, still flagged the material. This suggests that enforcement is driven by audio pattern matching or stylistic similarity rather than by the legal status of the underlying composition, which sets a dangerous precedent for working artists.

This situation is not merely an isolated incident involving a single genre; it points to a systemic failure in how major digital platforms manage the intersection of human creativity, algorithmic detection, and outdated copyright law. The combination of AI mimicry and automated legal enforcement creates a volatile environment for independent creators.

The Failure of Automated Copyright Enforcement

The core conflict revealed by the Campbell case is the overreach and technical failure of automated content identification systems. When a musician performs a public domain ballad, no one can claim copyright in the composition itself. However, a specific sound recording of the performance, and any sufficiently original arrangement, can carry rights of their own, and these are the layers that platforms attempt to manage. Vocal timbre, by contrast, is generally not something copyright protects.

The platform’s acceptance of a copyright claim against public domain source material indicates that the detection algorithms are likely flagging patterns associated with the style or sound of the performance rather than verifying who, if anyone, owns the composition. This shifts the focus of copyright enforcement from the composition (the notes) to the execution (the performance), a murky legal area that has historically been difficult to police.
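The distinction between a claim on the composition and a claim on a specific recording can be made concrete with a small sketch. This is a hypothetical review policy, not a description of how any real platform works: the names `Claim`, `Upload`, `ClaimBasis`, and `review_claim`, and the assumption that the platform knows the public domain status and the recording owner, are all invented for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class ClaimBasis(Enum):
    COMPOSITION = "composition"          # the notes and lyrics
    SOUND_RECORDING = "sound_recording"  # one specific recorded performance


@dataclass(frozen=True)
class Claim:
    claimant: str
    basis: ClaimBasis


@dataclass(frozen=True)
class Upload:
    composition_public_domain: bool  # assumed to be known to the reviewer
    recording_owner: str             # who made this particular recording


def review_claim(claim: Claim, upload: Upload) -> str:
    """Return 'reject', 'uphold', or 'manual_review' (hypothetical policy)."""
    if claim.basis is ClaimBasis.COMPOSITION:
        # Nobody owns a public domain composition, so the claim fails outright.
        if upload.composition_public_domain:
            return "reject"
        # Ownership of a protected composition cannot be decided from this data.
        return "manual_review"
    # A recording claim only holds if the claimant owns this exact recording.
    if claim.claimant == upload.recording_owner:
        return "uphold"
    return "reject"


# A performer uploads their own recording of a public domain ballad.
upload = Upload(composition_public_domain=True, recording_owner="performer")
print(review_claim(Claim("claimant_x", ClaimBasis.COMPOSITION), upload))
print(review_claim(Claim("claimant_x", ClaimBasis.SOUND_RECORDING), upload))
```

Under these assumptions both claims from the outside party are rejected. A system that matches only on how the audio sounds never asks either question, which is the failure the Campbell case appears to illustrate.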

Furthermore, the existence of AI-generated deepfakes adds another layer of complexity. These fakes do not just mimic the sound; they mimic the persona. An AI can generate a convincing facsimile of a musician’s voice and style, producing content that listeners may be unable to distinguish from the real thing. When this is combined with copyright trolling, in which claimants use automated systems to file claims based on tenuous or non-existent rights, the original artist is caught in a legal and technical vise.

AI Deepfakes and the Erosion of Artistic Identity

The threat posed by AI deepfakes extends far beyond simple mimicry; it fundamentally challenges the concept of artistic identity. For a folk musician whose brand is intrinsically tied to their unique voice, mannerisms, and specific performance style, the ability of AI to replicate this is a profound economic and creative threat.

These deepfakes are not simply novelty content. They can be used to generate entire bodies of work, placing the original artist in a position where their creative output can be commodified and distributed without their consent, compensation, or knowledge. This is a direct assault on the artist's ability to control their own likeness and labor.

The current legal framework, which generally requires demonstrable proof of infringement (e.g., copying a specific, protected element), is ill-equipped to handle the fluid, generative nature of AI. Deepfakes operate in a gray area: they can be argued to be transformative, yet they are derived directly from the source artist's recorded performances. The technology is moving faster than the law can establish clear lines of ownership over one's own voice or style.

The Public Domain in the Age of Generative AI

The case underscores a critical tension between the public domain and the capabilities of generative AI. The public domain is intended to be a commons—a pool of cultural material available for everyone to build upon. Historically, this meant that creators could freely use old songs and poems.

However, AI changes the calculus. While the notes of a public domain ballad are free to use, a particular artist's recorded performance of them is not. If an AI can generate a convincing, high-quality performance of that public domain material, it raises a new question: does the AI-generated performance constitute a new, copyrightable work, or is it simply a mechanical reproduction of the public domain source?

The industry needs clear guidelines on how AI models are trained. If a model is trained on a specific artist’s body of work, does the resulting output carry an implied license, or does the training itself constitute a form of unauthorized use of the artist's protected performance data? Until these questions are answered, independent creators remain exposed to a volatile mix of automated claims and technological mimicry.