Overview

The rollout of Sora 2 and the associated app reveals an intensely engineered safety architecture, signaling a maturation point for generative video AI. OpenAI has built concrete protections into the core functionality, moving beyond simple content filters to embed deep, traceable provenance signals within every generated video. This approach attempts to solve the industry's most pressing challenge: distinguishing synthetic media from reality.

Every video created using Sora embeds both visible and invisible provenance signals. Crucially, all outputs carry C2PA metadata, an industry-standard signature designed to authenticate digital content. This technical layer of watermarking is complemented by internal reverse-image and audio search tools, which maintain a high degree of accuracy in tracing videos back to the Sora platform. Furthermore, many outputs include visible, dynamic watermarks that explicitly name the creator, establishing a clear chain of custody for the synthetic media.

The focus on detection is paired with a granular approach to personal identity. The system allows for sophisticated image-to-video generation using photos of family and friends, but this capability is wrapped in layers of consent requirements. Users must attest that they have consent from every individual featured and that they hold the legal right to upload the media, a framework that serves both creative utility and legal liability mitigation.

Provenance and Detection: Tracing the Synthetic Fingerprint

The technical mechanisms for proving a video's origin are arguably the most significant policy developments. By mandating C2PA metadata and internal tracing tools, OpenAI is attempting to establish a verifiable digital signature for AI content. This moves the conversation about deepfakes from a purely ethical concern to a technical, solvable problem of digital forensics.

The system's ability to trace videos back to their source, building on successful systems from earlier iterations, gives OpenAI a central point of verification for synthetic media. This is critical for newsrooms, legal entities, and any industry sector that relies on visual evidence. The visible watermarks, which include the creator's name, act as a persistent brand on the content and establish immediate accountability.

This level of mandated provenance signals a shift in the market expectation for AI content. The assumption that any high-fidelity video is inherently real is being challenged by the platform itself, forcing downstream consumers and developers to integrate verification tools into their workflows.
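To make the verification idea concrete, here is a minimal sketch of how a provenance claim can be bound to a file's content and checked later. It is not the C2PA format or any OpenAI API: real C2PA manifests are signed with certificate-based signatures, while this example substitutes a shared-secret HMAC from Python's standard library so it stays self-contained. The names `SIGNING_KEY`, `make_manifest`, and `verify` are hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: a demo shared secret. Real C2PA signing uses certificates,
# not a secret shared between signer and verifier.
SIGNING_KEY = b"demo-key"


def make_manifest(video_bytes: bytes, generator: str) -> dict:
    """Build a signed claim that records who generated the file and its hash."""
    claim = {
        "generator": generator,
        "sha256": hashlib.sha256(video_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(video_bytes: bytes, manifest: dict) -> bool:
    """Return True only if the claim is untampered and matches the file."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was altered after signing
    # The claim is authentic; now confirm it describes these exact bytes.
    return manifest["claim"]["sha256"] == hashlib.sha256(video_bytes).hexdigest()
```

A check like this only works while the manifest travels with the file. Metadata can be stripped when a video is re-encoded or re-uploaded, which is one plausible reason the provenance approach described here also relies on visible watermarks and internal search tools.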

Personal Likeness and Consent-Based Control

The handling of personal likeness represents a delicate balance between creative freedom and individual rights. The introduction of the "Characters" feature allows users to gain strong, controlled command over their own appearance and voice within Sora. This is not merely a profile feature; it is a sophisticated rights management system.

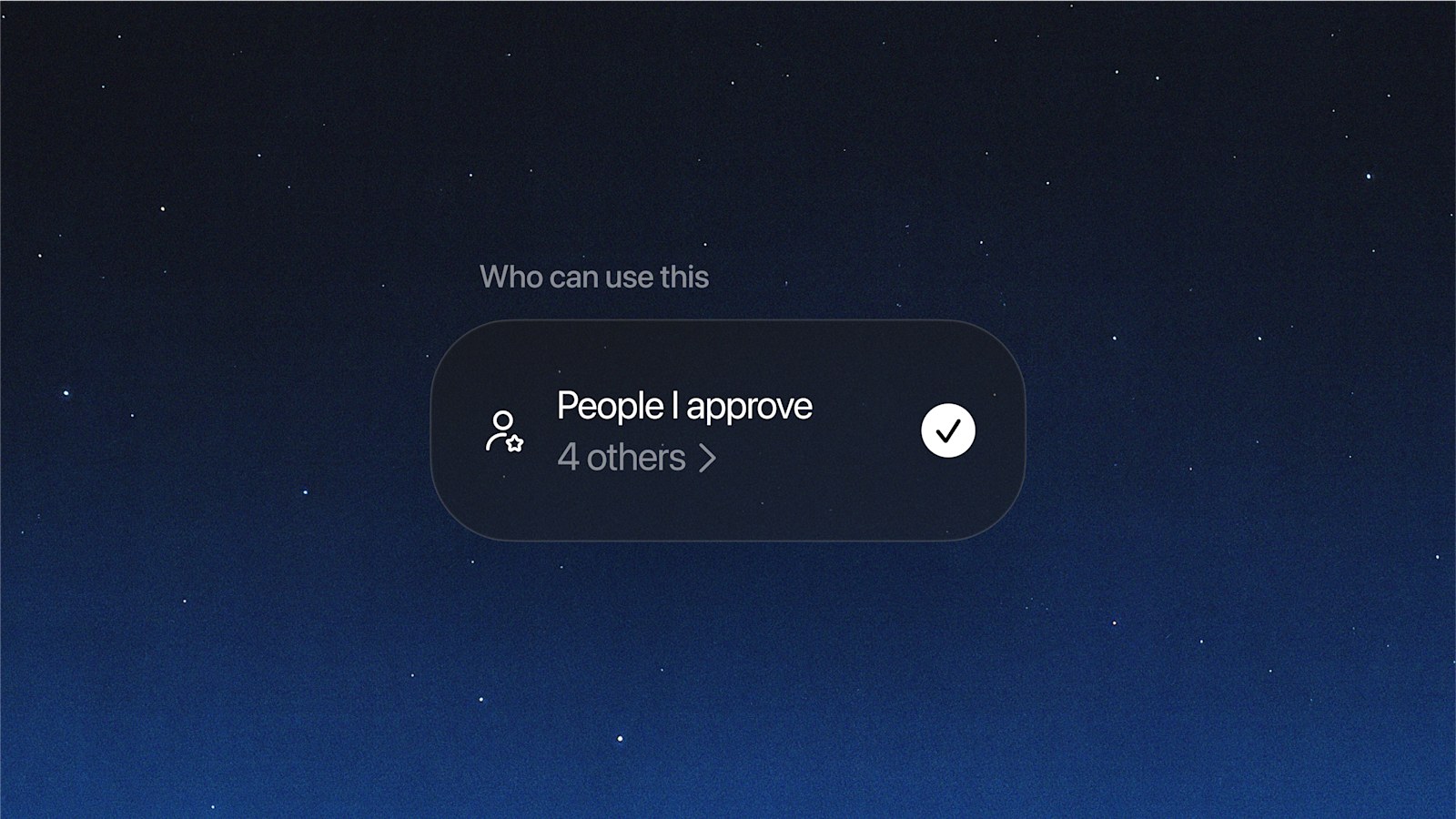

Users must operate within guardrails that ensure any audio or image likeness captured in these characters is used only with explicit, recorded consent. The platform empowers the individual to dictate who can access their character, with the ability to revoke access at any time. This granular control is paired with a transparency mechanism: any video featuring the user's character, including drafts created by other users, is always visible to the owner. This allows for proactive review, deletion, and reporting.
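The access rules described above can be sketched as a small data model. This is an illustration under stated assumptions, not Sora's actual implementation: the `Character` class and all of its methods are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Character:
    """Hypothetical record of one user's likeness and who may use it."""
    owner: str
    allowed_users: set = field(default_factory=set)
    videos: list = field(default_factory=list)

    def grant(self, user: str) -> None:
        """Owner gives another user permission to use the character."""
        self.allowed_users.add(user)

    def revoke(self, user: str) -> None:
        """Owner withdraws permission; later generation attempts fail."""
        self.allowed_users.discard(user)

    def can_use(self, user: str) -> bool:
        return user == self.owner or user in self.allowed_users

    def record_video(self, creator: str, title: str, draft: bool = True) -> dict:
        """Register a video featuring the character, enforcing consent."""
        if not self.can_use(creator):
            raise PermissionError(f"{creator} may not use {self.owner}'s character")
        video = {"creator": creator, "title": title, "draft": draft}
        self.videos.append(video)
        return video

    def visible_to_owner(self) -> list:
        """The owner sees every video featuring the character, drafts included."""
        return list(self.videos)

    def delete_video(self, requester: str, title: str) -> None:
        """Only the owner may delete videos that feature their likeness."""
        if requester != self.owner:
            raise PermissionError("only the character owner can delete")
        self.videos = [v for v in self.videos if v["title"] != title]
```

In this sketch, a draft made by a permitted user still appears in `visible_to_owner()`, and calling `revoke` blocks that user's future `record_video` calls without touching videos that already exist. How the real platform treats existing videos after revocation is not stated in the source.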

Even stricter guardrails apply when characters are involved: they limit major changes to the user's appearance and prevent the user from being placed in compromising situations. This identity protection, combined with the option to activate an even stricter usage set, moves the platform beyond simple moderation and into active, user-controlled digital identity management.

Layered Safety and Moderation Guardrails

The safety architecture is not a single filter but a multi-layered defense system designed to catch harmful content at multiple points in the creation and consumption lifecycle. This layered approach addresses risks before, during, and after generation.

At the point of creation, guardrails are designed to preemptively block unsafe content. This includes checking both the initial text prompt and the resulting video output, across multiple frames and audio transcripts, for prohibited material such as sexual content, terrorist propaganda, or self-harm promotion. Ongoing red-teaming stress-tests these policies against novel risks, particularly given Sora's increased realism and the addition of motion and audio.
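The two-stage check at creation time can be sketched as a short pipeline. This is a toy illustration, not OpenAI's system: the keyword map `TOY_POLICY` stands in for real classifiers, frame captions stand in for frame-level vision checks, and `moderate`, `flag_text`, and the `generate` callable are all hypothetical.

```python
from dataclasses import dataclass, field

# Assumption: toy keywords standing in for trained policy classifiers.
TOY_POLICY = {
    "terrorist_propaganda": ("propaganda",),
    "self_harm_promotion": ("self-harm",),
}


def flag_text(text: str) -> set:
    """Return the policy categories whose toy keywords appear in the text."""
    lowered = text.lower()
    return {
        category
        for category, words in TOY_POLICY.items()
        if any(word in lowered for word in words)
    }


@dataclass
class Decision:
    allowed: bool
    stage: str  # "prompt", "output", or "passed"
    categories: set = field(default_factory=set)


def moderate(prompt: str, generate) -> Decision:
    # Layer 1: reject a bad prompt before any generation happens.
    hits = flag_text(prompt)
    if hits:
        return Decision(False, "prompt", hits)

    # Layer 2: inspect the output. `generate` is a hypothetical callable
    # returning (frame_captions, audio_transcript) for the prompt.
    frame_captions, transcript = generate(prompt)
    hits = set()
    for caption in frame_captions:
        hits |= flag_text(caption)
    hits |= flag_text(transcript)
    if hits:
        return Decision(False, "output", hits)

    return Decision(True, "passed")
```

The point of the second layer is that an innocuous prompt can still yield a violating output, so passing the prompt check alone is not treated as sufficient.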

Beyond the generation phase, automated systems continuously scan all feed content against Global Usage Policies and filter out unsafe or age-inappropriate material. The protections are more pronounced for younger users. Teen accounts face specific limitations: harmful content is filtered from the feed, parental controls apply within the associated messaging features, and continuous scrolling is limited to manage usage patterns.