Navigating the immense promise and risk of AI

The speed at which AI is evolving is terrifying. One minute, we're using generative models to draft emails; the next, we're talking about systems that could manage power grids, predict market crashes, or even control autonomous vehicles. The promise is revolutionary—a leap forward that could redefine civilization. The risk, however, is equally massive.

And now, the big players are making their move.

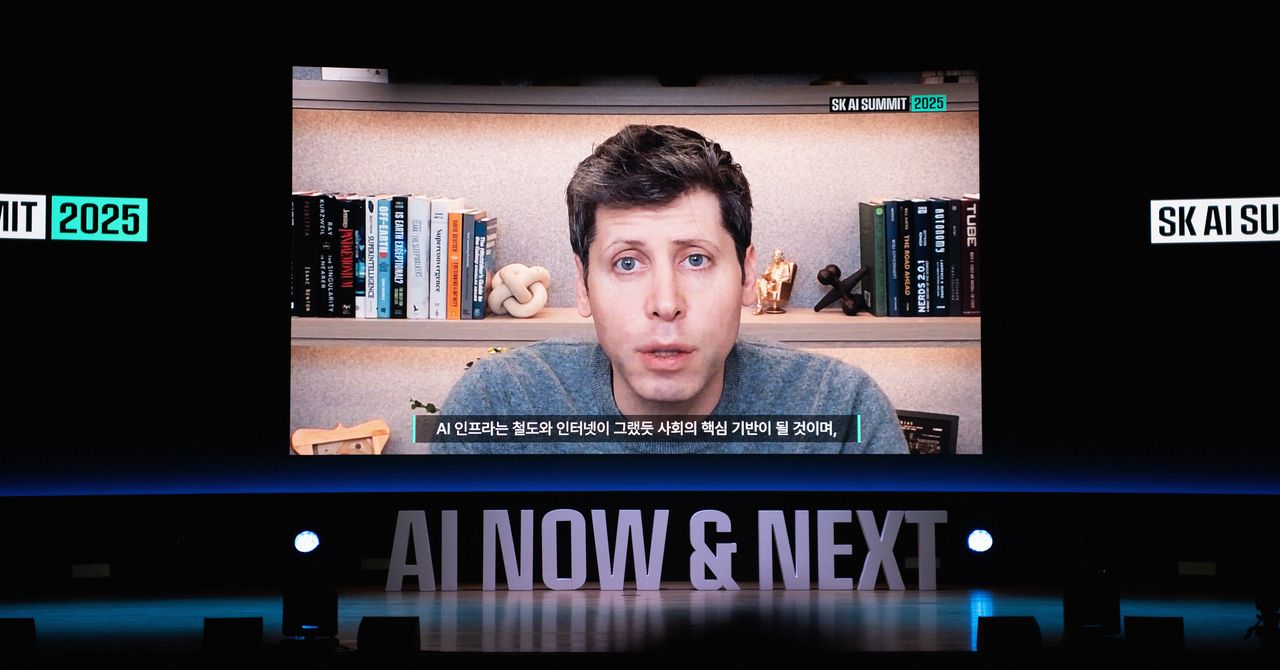

OpenAI, one of the most influential forces in the AI space, has publicly backed legislation designed to limit the liability of AI companies. On the surface, this sounds like standard lobbying. But when you look closer, this isn't just about legal paperwork; it's about fundamentally changing the risk calculus for building and deploying frontier AI models.

The Core Proposal: Limiting the Fallout

The legislation OpenAI is championing aims to create a legal carve-out, or a shield, for companies that develop and deploy advanced AI systems. The core concept is simple, yet profoundly impactful: if an AI system causes significant harm—whether that harm is a mass casualty event, a catastrophic financial collapse, or even just a massive data leak—the liability assigned to the developers of the model will be drastically limited.

Currently, the legal framework for AI is murky. Still, the general principle of product liability holds that if a product causes harm, the manufacturer or seller can be held responsible. That is the default playbook, and it is exactly the default this legislation would override for AI developers.

The Business Logic: De-Risking the Frontier

Why would OpenAI, and other major AI labs, push for this kind of legislation? The answer is purely economic: de-risking.

Developing frontier AI models is astronomically expensive. These labs require billions of dollars in compute power, specialized talent, and massive datasets. They operate in a high-stakes environment where the potential rewards are limitless, but the potential liabilities—both legal and ethical—are equally immense.