Overview

Nvidia has released the Physical AI Data Factory Blueprint, an open reference architecture for generating, augmenting, and evaluating the training data that physical AI systems need to function in the real world. Robots, autonomous vehicles, and industrial vision systems all require training data that is expensive and sometimes impossible to collect at the required scale. The Blueprint provides a standardized pipeline for generating that data synthetically.

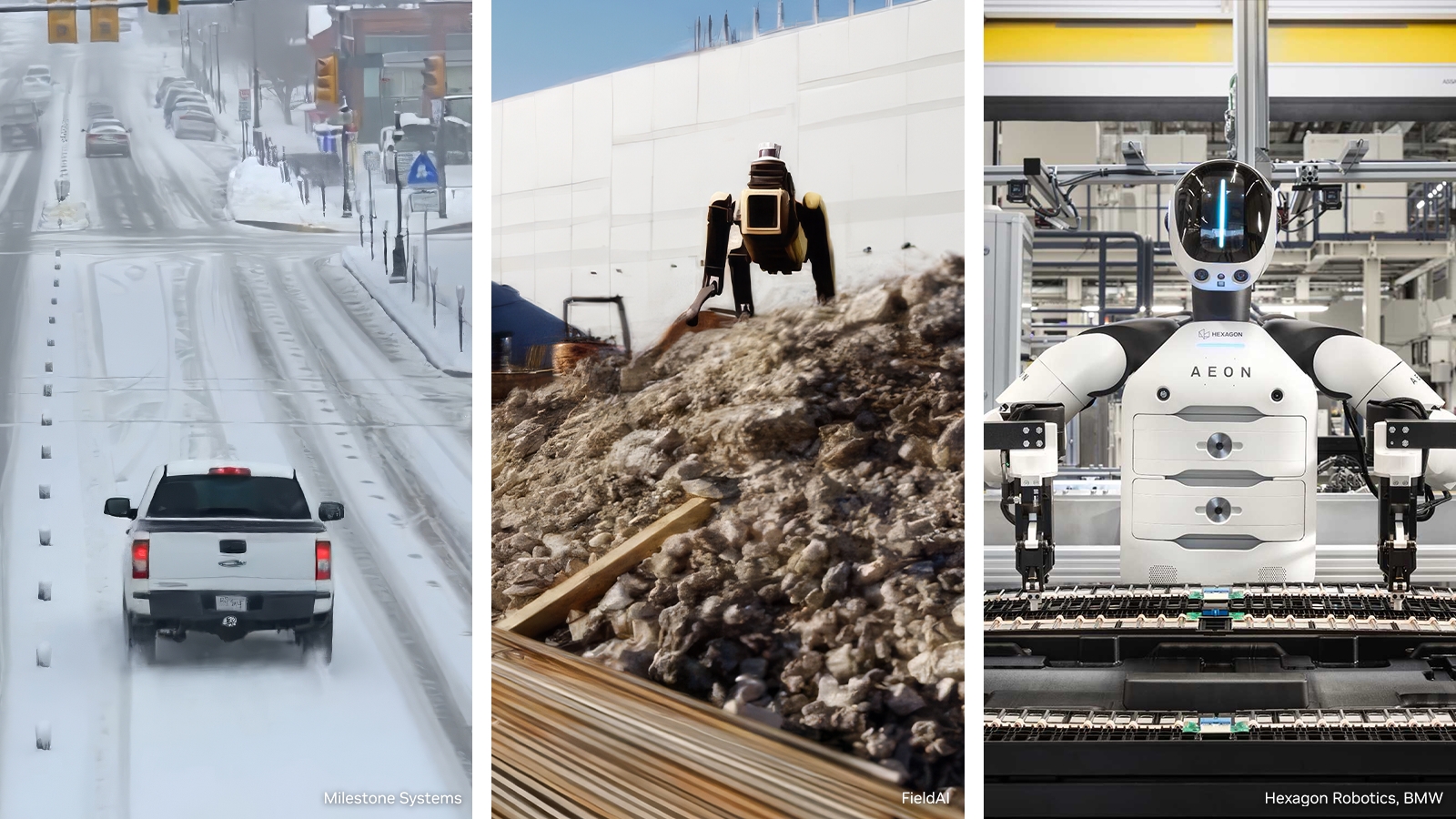

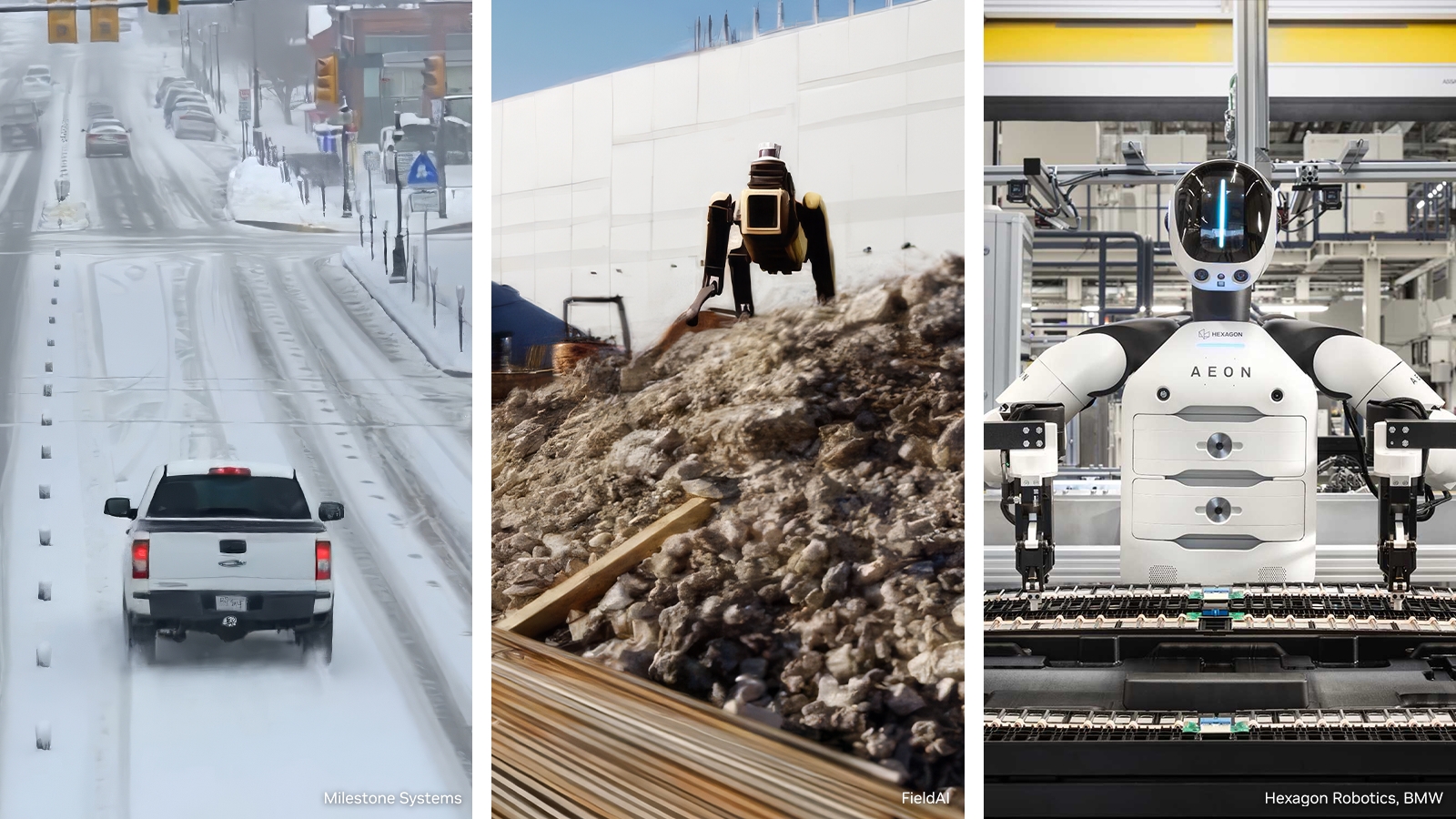

FieldAI, Hexagon, Uber, and Teradyne are already using the Blueprint in production systems. The early adoption by a logistics company, a precision measurement firm, a field robotics startup, and a manufacturing automation leader covers most of the major physical AI application domains. Nvidia is not releasing a research paper. It is releasing infrastructure that companies are actively using.

Open reference architecture for generating and evaluating training data

The Training Data Problem

Physical AI systems need training data that reflects the full distribution of conditions they will encounter in deployment. An autonomous vehicle cannot be trained only on clear daytime highway footage. It needs rain, fog, night driving, construction zones, unusual road markings, and edge cases that occur infrequently in real collection but frequently enough in deployment to matter. Collecting all of this on real roads is slow, expensive, and in some cases requires deliberately creating dangerous situations.

The same problem applies to industrial robots. A robot arm that handles fragile components needs to have encountered thousands of drop scenarios, surface texture variations, and lighting conditions before it touches anything in production. You cannot run those experiments on the actual product without destroying it. You cannot collect that data from a real production line without interrupting operations.

Synthetic data is the obvious solution, and the industry has known this for years. The execution problem is that synthetic data pipelines have been fragmented, expensive to build from scratch, and inconsistent in quality across organizations. Each company reinvents the same infrastructure. The Blueprint provides a reusable foundation so teams can focus on the domain-specific content of their training data rather than the pipeline mechanics.

What the Blueprint Provides

The Blueprint unifies three pipeline stages that are typically handled by separate, poorly integrated tools. Generation creates synthetic scenarios using physics simulation and rendering. Augmentation applies transformations to increase variety: lighting changes, weather effects, sensor noise models, and domain randomization techniques. Evaluation runs automated quality checks to filter out synthetic data that is too unrealistic to transfer to real-world performance.

The open licensing means companies can use the Blueprint without a vendor relationship with Nvidia, though the compute for running large-scale synthetic data generation at training speed will, by design, run most efficiently on Nvidia infrastructure. The Omniverse platform, which powers the simulation layer, is deeply optimized for Nvidia GPUs. This is the same structural dynamic as CUDA: free to adopt, expensive to run without Nvidia hardware.

The Blueprint also integrates with Nvidia's Isaac robotics platform and Cosmos world foundation models, creating a vertically integrated stack for physical AI development. The integration is optional but convenient. Companies already using Isaac for robot control can extend their existing Nvidia investment into the data generation layer without adopting entirely new tooling.

Why Synthetic Data Quality Is the Real Bottleneck

Generating synthetic data is not hard. Rendering photorealistic scenes, simulating physics, and creating labeled datasets has been technically feasible for years. The hard part is sim-to-real transfer: the gap between performance on synthetic training data and performance in real-world deployment. Models trained on synthetic data often fail in deployment in ways that are hard to predict because the synthetic distribution does not accurately capture the real-world distribution.

The gap shows up in the details that are easy to get wrong in simulation. Lighting in the real world bounces off surfaces in ways that renderers approximate but do not perfectly replicate. Objects have surface textures that vary with age, wear, and environmental exposure. Sensor noise models for cameras and lidar are statistical approximations of hardware behavior that changes across individual units and environmental conditions. Each of these gaps is small in isolation. Together, they add up to a model that works well in simulation and fails in edge cases in deployment.

The Blueprint addresses this through its augmentation and evaluation stages. Augmentation applies domain randomization: systematic variation in the parameters that sim-to-real gaps tend to cluster around. Evaluation uses held-out real-world test sets to measure how well synthetic data transfers before you commit to a full training run. This closed-loop approach to synthetic data quality is what separates production-grade pipelines from research prototypes, and it is the part that most organizations get wrong when they build their own solutions.

The Companies Already Using It and What They're Building

FieldAI uses the Blueprint for field robotics, systems that operate in unstructured outdoor environments: construction sites, agricultural fields, disaster response scenarios. These environments are among the hardest for physical AI because they are unpredictable by definition. Generating synthetic training data for a construction site requires modeling terrain variation, dynamic obstacles, weather across seasons, and equipment configurations that change day to day. The Blueprint provides the pipeline; FieldAI provides the domain knowledge about what conditions matter.

Hexagon applies it to industrial precision measurement, where robotic systems must achieve millimeter or sub-millimeter accuracy in environments with vibration, thermal expansion, and variable lighting. The data requirements are different from field robotics: less variety in environment, much higher precision requirements for the training data. Hexagon uses the augmentation stage to model the specific sensor characteristics of their measurement hardware rather than generic camera models.

Uber and Teradyne represent the autonomous logistics and manufacturing automation ends of the spectrum. Uber's autonomous vehicle work requires synthetic data across a massive range of road conditions, traffic scenarios, and edge cases that real collection cannot efficiently cover. Teradyne's manufacturing robots need training data that reflects the specific assembly tasks, component tolerances, and production line configurations of their customers. Both companies benefit from the evaluation framework more than the generation stage, using it to validate that their existing synthetic data pipelines actually transfer to real-world performance before each model deployment.