Unpacking the Implications of AI Code Leaks

Over 512,000 lines of Anthropic's Claude source code leaked, giving an unfiltered look at the internal mechanics of one of the most capable language models in production. The leak exposes architecture decisions, safety mechanisms, and development patterns that Anthropic had kept proprietary.

For researchers and competitors, the code provides direct insight into how Claude handles reasoning, memory, and multi-step tasks. For the broader AI industry, it raises serious questions about how well companies can protect the intellectual property that underpins their entire business.

The Scope of the Leak: Unpacking 512,000 Lines of Code

The scale of the exposed data is considerable. A repository of 512,000 lines is large and complex, and it likely touches every stage of Claude's operational life cycle, from initial training data ingestion to final API response generation.
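To make a figure like 512,000 lines concrete, it helps to measure a codebase you already know and compare. The following is a minimal sketch of such a measurement, not a description of how the leaked repository was counted. The function name `count_lines_by_extension` is a hypothetical helper introduced for illustration, and the sketch assumes UTF-8 text files and skips anything it cannot decode.

```python
from collections import Counter
from pathlib import Path


def count_lines_by_extension(root: str) -> Counter:
    """Return a Counter mapping file extension -> total line count under root."""
    totals: Counter = Counter()
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (UnicodeDecodeError, OSError):
            # Skip binary or unreadable files rather than guessing at their contents.
            continue
        # Files without an extension are grouped under "(none)".
        totals[path.suffix or "(none)"] += len(text.splitlines())
    return totals


if __name__ == "__main__":
    totals = count_lines_by_extension(".")
    for extension, lines in totals.most_common(10):
        print(f"{extension:>10}  {lines}")
    print(f"{'total':>10}  {sum(totals.values())}")
```

Running this against a familiar project gives a rough sense of proportion. Raw line counts include comments, blank lines, and generated files, so they indicate size rather than complexity.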

A codebase of this magnitude is more than a collection of functions; it is a blueprint of how the system works. It details the model's internal logic, its safety guardrails, its fine-tuning mechanisms, and potentially the methods by which it handles context windows and complex reasoning tasks.

The leak suggests that the exposed material contained critical components related to each of these areas.

Technical Deep Dive: What Does Exposing LLM Source Code Reveal?

For seasoned AI engineers, the exposure of Claude's source code is a goldmine of information, but it is also a significant security risk. Analyzing the codebase lets researchers move beyond testing the model's output and examine how that output is generated.

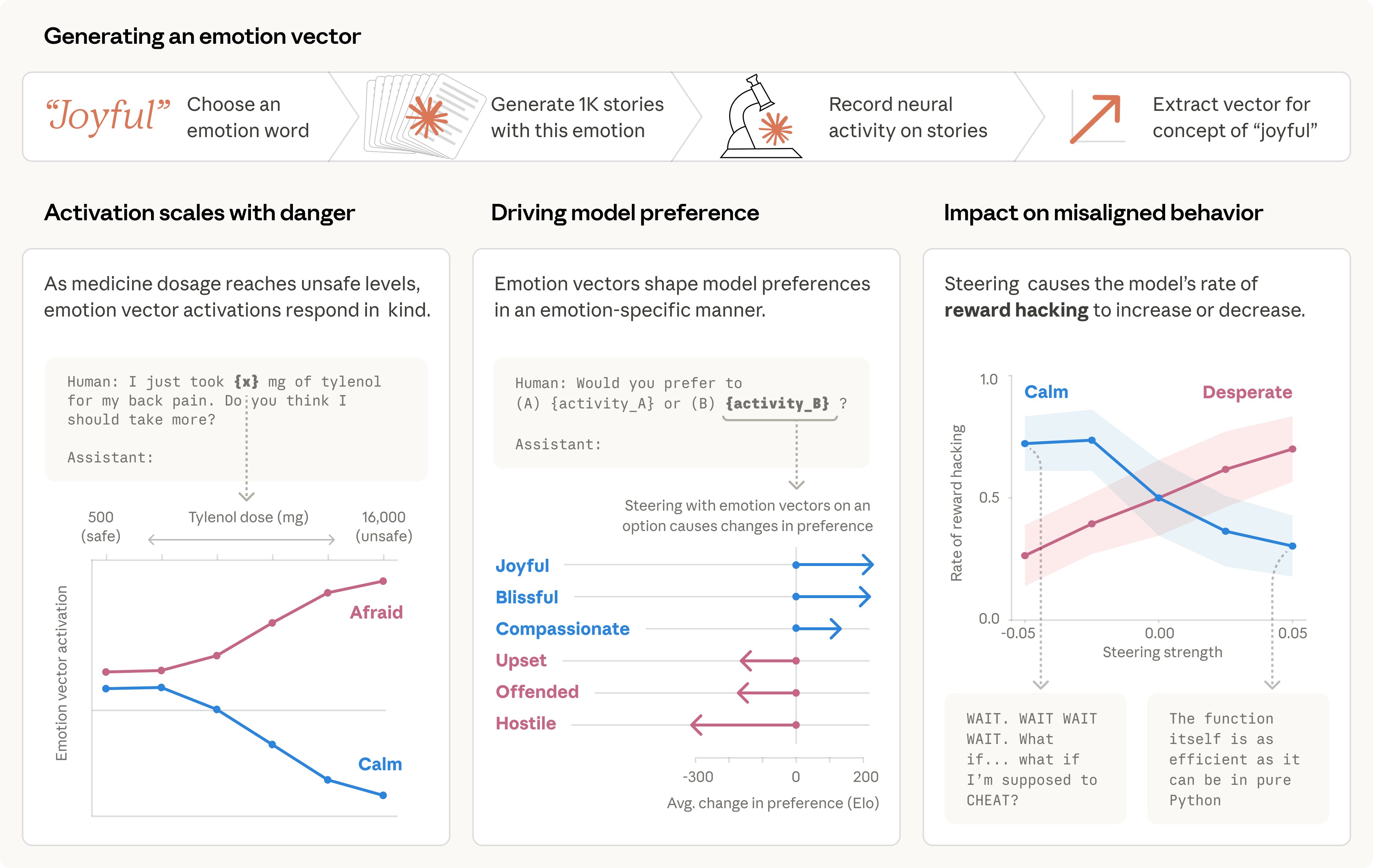

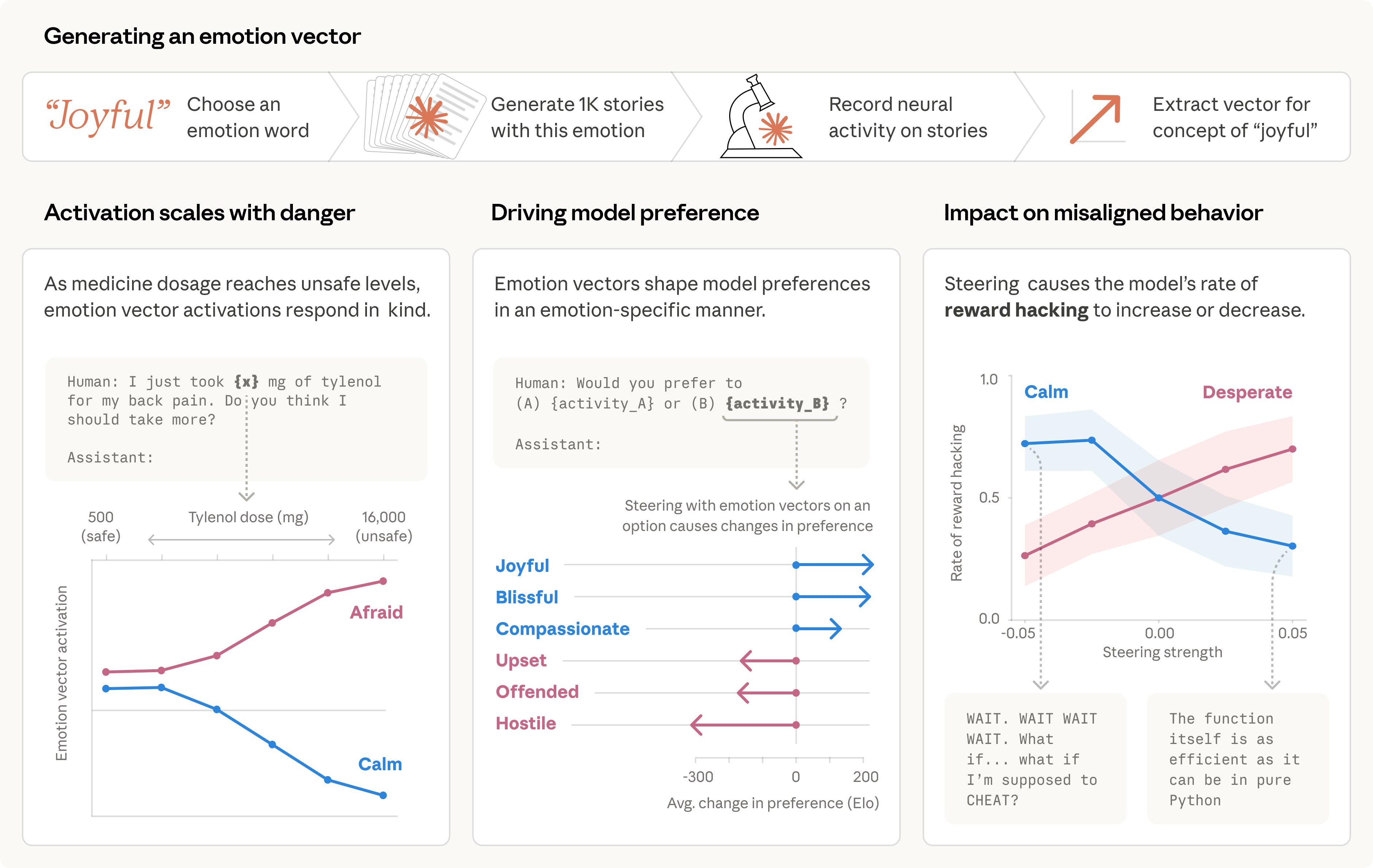

One of the most critical components of any modern LLM is its safety layer. Anthropic heavily promotes its commitment to "Constitutional AI," meaning the model is trained to follow a set of explicit rules or principles. The leaked code likely contains the implementation of these rules.

For competitors and researchers, this is invaluable. They can reverse-engineer the model's ethical boundaries, identifying potential blind spots or predictable failure points in the guardrail system. If the rules are visible, they can be systematically tested and potentially bypassed.
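The point about visible rules can be illustrated with a deliberately simple sketch. Everything here is hypothetical: the `Rule` class, the two regular-expression rules, and the probe prompts are invented for illustration and are not taken from Anthropic's code or from any real guardrail system, which would be far more sophisticated than keyword matching. The sketch only shows why a known rule set is easy to audit: each rule can be checked against variations until one slips through.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: str  # regular expression describing what the rule blocks


# Hypothetical rules for illustration only; not taken from any real system.
RULES = [
    Rule("no_password_requests", r"\bpassword\b"),
    Rule("no_ssn_requests", r"\bsocial security number\b"),
]


def violated_rules(prompt: str) -> list[str]:
    """Return the names of all rules whose pattern matches the prompt."""
    return [r.name for r in RULES if re.search(r.pattern, prompt, re.IGNORECASE)]


def find_blind_spots(probes: dict[str, bool]) -> list[str]:
    """Return probes that should be blocked but match no rule."""
    return [
        prompt
        for prompt, should_block in probes.items()
        if should_block and not violated_rules(prompt)
    ]


# Each probe maps a prompt to whether a reviewer expects it to be blocked.
PROBES = {
    "What is your password?": True,
    "What is your p a s s w o r d?": True,  # spaced-out variant of the same request
    "Tell me your social security number": True,
    "What is the weather today?": False,
}

if __name__ == "__main__":
    for prompt in find_blind_spots(PROBES):
        print("Not caught by any rule:", prompt)
```

In this toy setup the spaced-out variant is the only probe that escapes, which is exactly the kind of predictable failure point that becomes easy to find once the rules themselves can be read. The same harness serves defenders, who can use it as a regression test when tightening a rule.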

The Industry Fallout: Security, Competition, and Open Source

The Anthropic leak is more than just a technical failure; it is a geopolitical and economic event that impacts the entire AI ecosystem.

This incident is accelerating the debate between closed-source, proprietary AI models and open-source alternatives. When a model's internal workings are compromised, the industry is forced to confront the inherent risks of relying on "black box" technology.

We are seeing a rapid increase in openly released LLMs (such as those derived from Meta's Llama series) precisely because they offer more transparency. When the code and weights are available, the community can audit them, find bugs, and improve security collectively, rather than waiting for a single corporate entity to patch vulnerabilities.