Overview

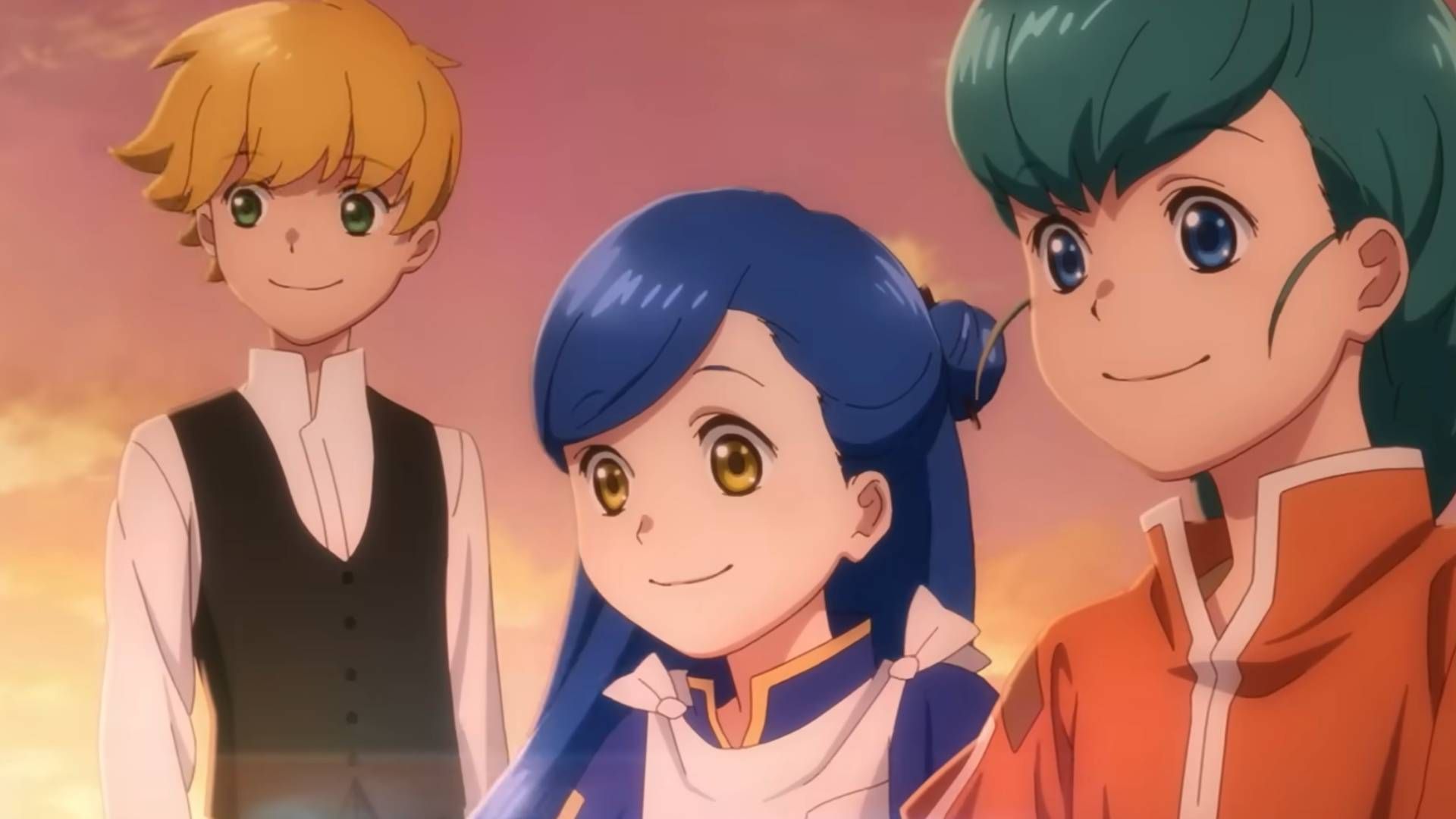

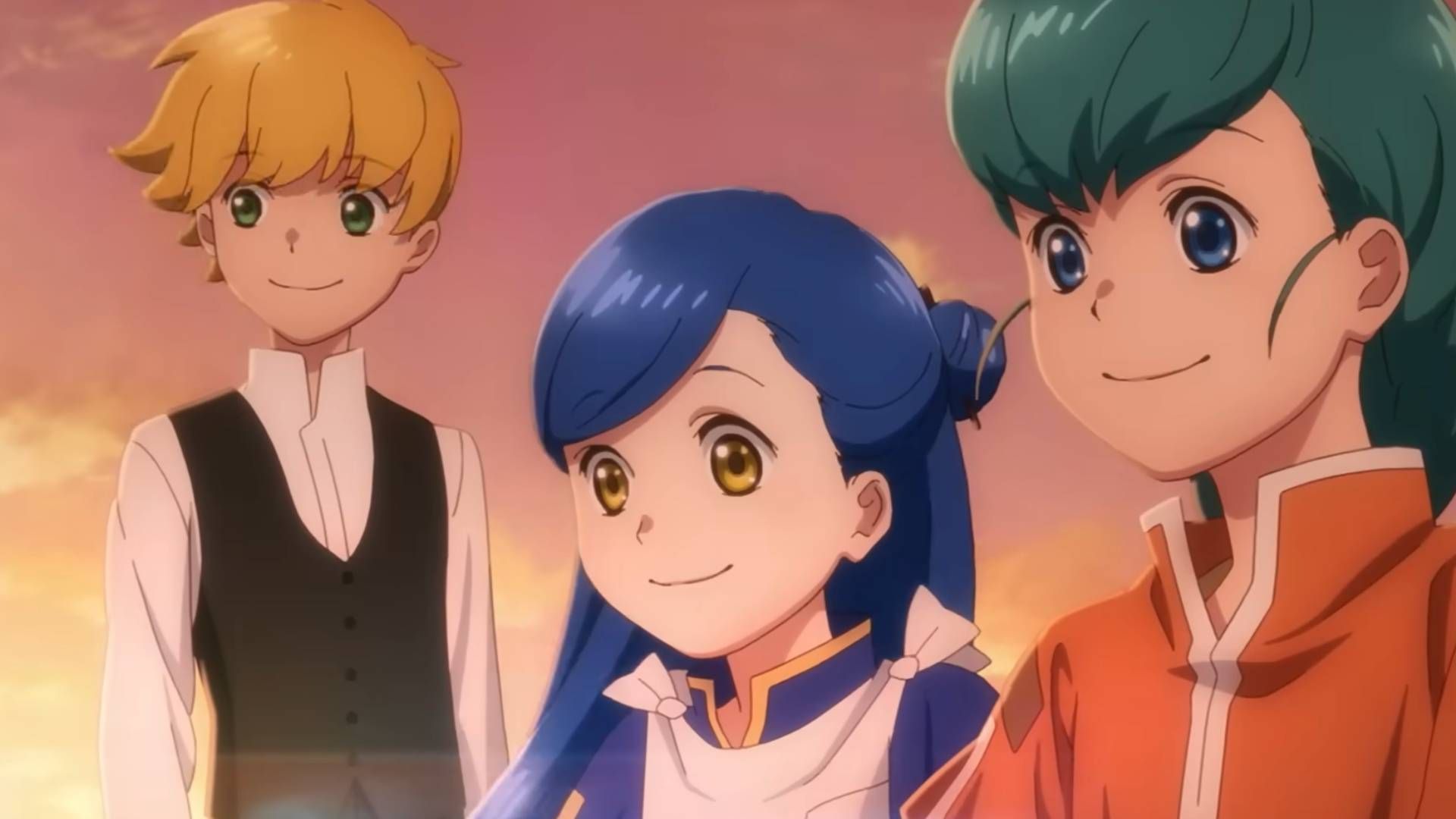

The studio responsible for the Ascendance of a Bookworm anime has replaced the show's opening credits following controversy over the use of generative AI. This swift, visible retraction marks one of the highest-profile instances of an animation house publicly adjusting its creative process in response to ethical and industry scrutiny. The move suggests that the line between technological efficiency and artistic integrity remains volatile and poorly defined in modern media production.

The incident draws attention to the rapidly escalating debate over AI's role in creative industries, particularly animation, which relies heavily on highly skilled, specialized labor. While generative AI tools promise unprecedented speed and cost reduction, their integration raises fundamental questions about authorship, compensation, and the very definition of human artistry. The studio's decision, while appearing to be a simple PR fix, is a potent signal about the market's current tolerance for AI-assisted content.

This development serves as a critical case study for other animation houses and content creators who are currently navigating the choppy waters of technological adoption. The industry is moving past the initial novelty phase of AI tools and entering a period of forced accountability, where ethical guidelines and labor protections are becoming non-negotiable requirements for continued operation.

The Mechanics of Creative Retraction

The core issue centered on the use of generative AI in creating the opening sequence, a process that, while technologically novel, triggered immediate backlash from both industry critics and the general public. The controversy was not merely about the existence of AI tools but about how they were deployed: specifically, whether the AI output was presented as human-directed work or served mainly as a cost-saving shortcut.

In the high-stakes world of anime production, opening credits are not merely decorative; they are integral pieces of marketing and mood-setting that define the show's tone for the viewer. Replacing them can be a significant logistical undertaking, requiring new creative assets and coordination across the animation, music, and production teams. That the studio could execute a rapid replacement suggests considerable operational capacity, but the necessity of the change speaks volumes about the external pressure it faced.

The studio’s apology, which preceded the credit replacement, was a calculated maneuver designed to manage public perception. By acknowledging the controversy and taking immediate, visible corrective action, the studio attempted to pivot the narrative from "AI misuse" to "studio commitment to quality." However, industry observers view such PR moves with skepticism, recognizing that an apology alone does not resolve the underlying tension between technological capability and artistic authenticity.

AI Ethics and the Labor Market

The Ascendance of a Bookworm incident is symptomatic of a broader systemic crisis facing creative labor. Generative AI models, while powerful, are trained on vast datasets of existing human-created work, including the work of artists, animators, and writers. The debate, therefore, quickly shifts from "Can AI create art?" to "Who owns the art that AI is trained on, and who gets paid for it?"

For the animation sector, the threat is particularly acute. Animation is a highly specialized, labor-intensive field where years of training are required to master techniques like character rigging, in-betweening, and complex visual effects. If AI can generate passable, high-volume assets cheaply, the economic value of these specialized human skills diminishes rapidly. The studio's retraction, while a concession, does not solve the fundamental economic vulnerability of the workforce.

Furthermore, the controversy forces a necessary, albeit painful, conversation about transparency. Viewers and critics are increasingly demanding to know the extent of human involvement in every piece of media they consume. The opacity surrounding AI usage, where the line between human prompting and machine execution is blurred, is what fuels mistrust. The industry is being forced to develop a new standard of disclosure, moving beyond simple disclaimers to verifiable documentation of creative input.

Setting a Precedent for Future Productions

This episode establishes a critical precedent for how major studios will handle AI integration moving forward. The lesson for the industry is clear: technological adoption must be paired with impeccable public relations and, more importantly, a demonstrable commitment to human creative input. Simply having access to a powerful tool is insufficient; the narrative of human craftsmanship must remain paramount.

For studios planning future projects, the Ascendance of a Bookworm case serves as a warning shot. Any perceived attempt to use AI as a shortcut around established creative processes will be met with immediate, organized resistance. This resistance is not limited to ethical purists; it includes the core audience who value the unique, imperfect, and deeply human touch that defines great art.

The market is signaling a preference for "human-first" content. This means that the most successful and sustainable integration of AI will not be the one that uses the most advanced algorithms, but the one that uses AI to augment human talent without replacing the human signature. The future of animation, therefore, hinges on establishing clear, ethical boundaries where AI acts as a sophisticated assistant, not a primary author.