Overview

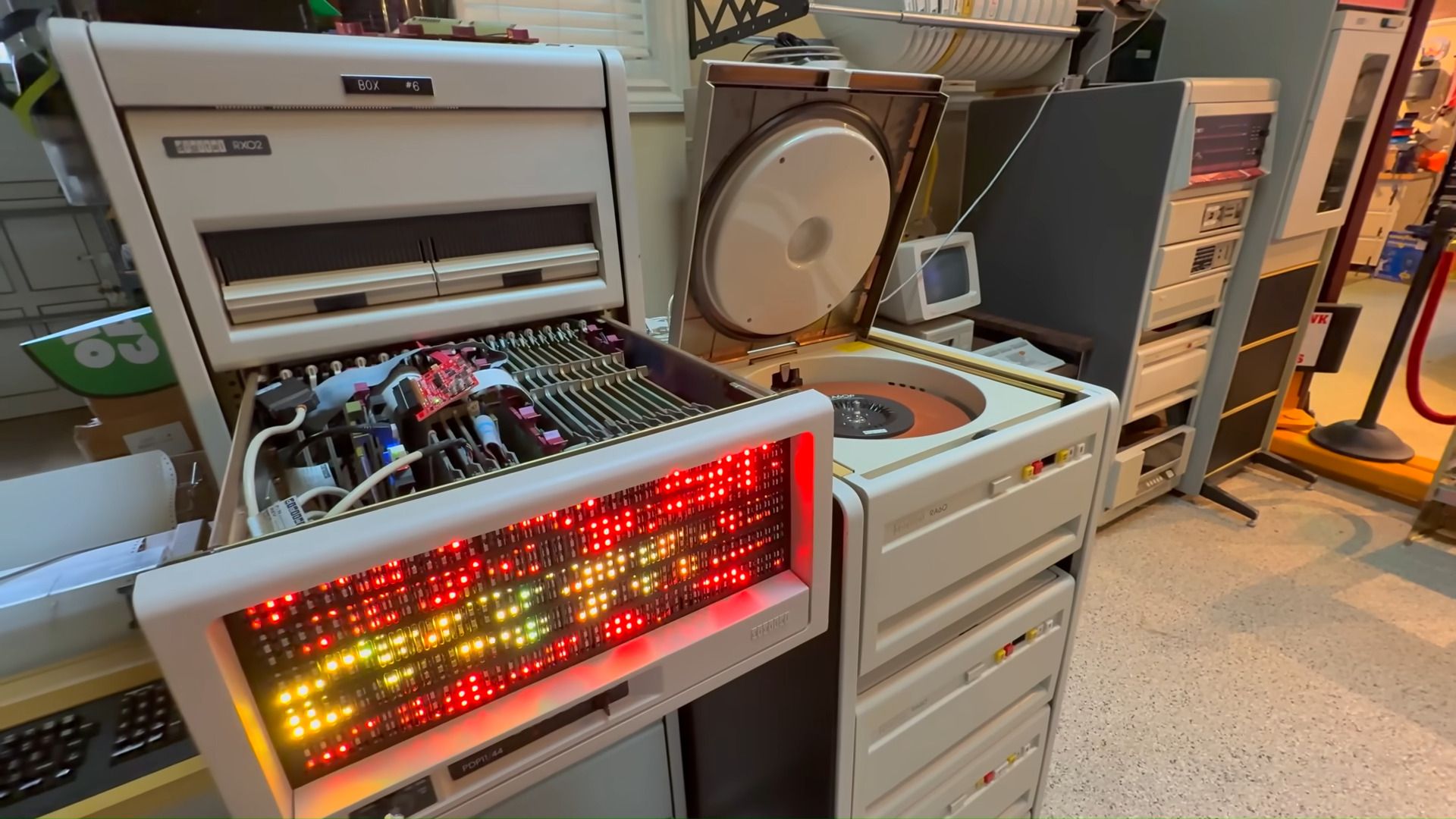

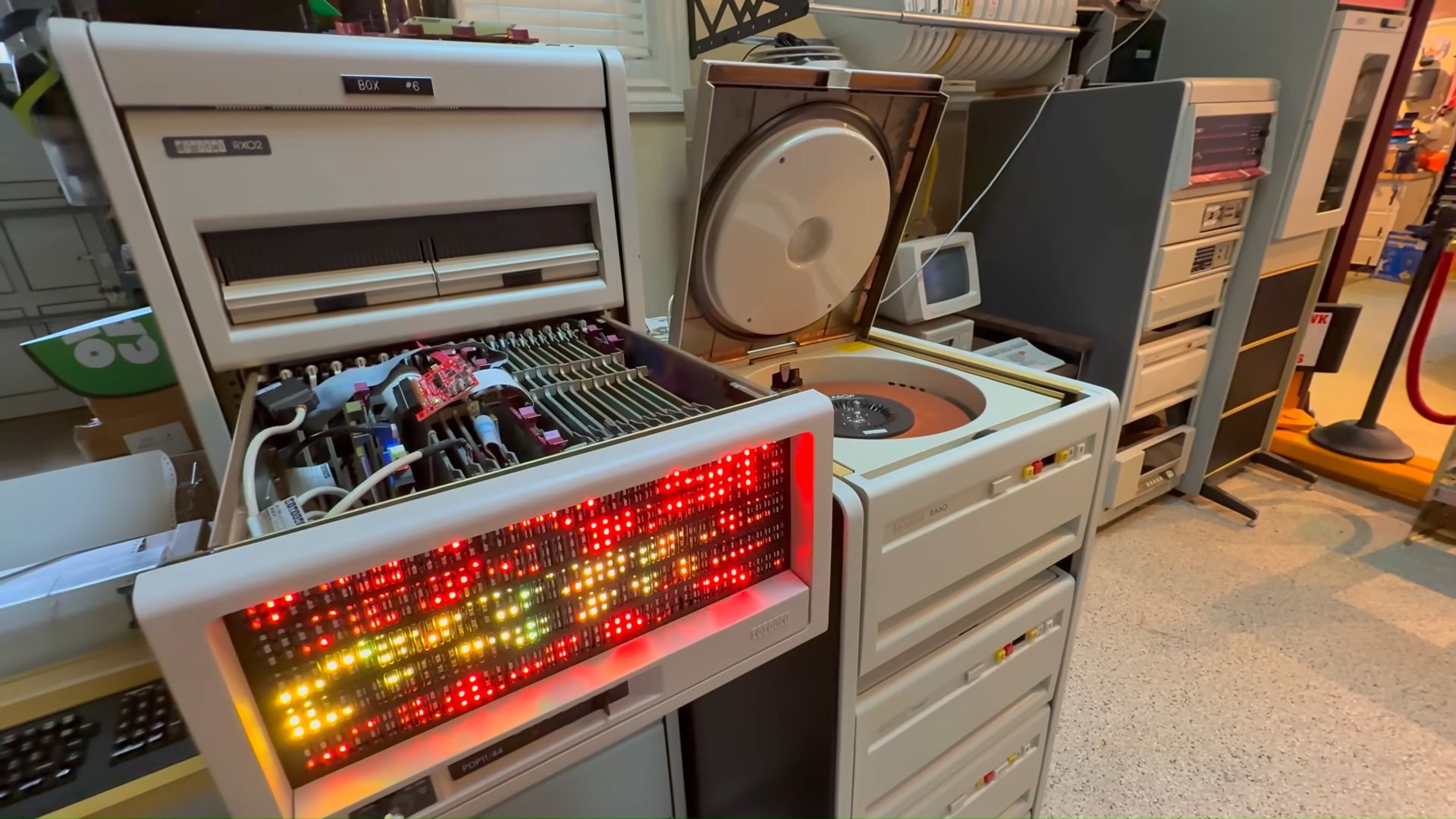

A veteran developer has achieved a computational feat that defies modern expectations: running a complex transformer AI model on a 47-year-old PDP-11 minicomputer. The system, limited to a 6 MHz CPU and a mere 64KB of RAM, executes the sophisticated AI task using a model written entirely in PDP-11 assembly language. This project is not merely an academic curiosity; it represents a deep dive into the fundamental limits and enduring capabilities of computing architecture.

The achievement requires more than just historical hardware; it demands a mastery of low-level programming that bypasses decades of abstraction layers. Transformer models, the backbone of modern large language models (LLMs), are typically associated with massive GPU clusters and petabytes of memory. To successfully port this capability to a machine designed for a different era of computing highlights the developer’s profound understanding of both AI principles and vintage machine architecture.

This demonstration challenges the prevailing narrative that advanced AI computation is solely dependent on the latest silicon. Instead, it forces a re-examination of how computational efficiency and algorithmic design can decouple performance from raw hardware power. The resulting system is described by observers as "gloriously absurd," yet its technical complexity solidifies it as a significant benchmark for constrained computing.

The Technical Feat of Assembly-Level AI Implementation

The Technical Feat of Assembly-Level AI Implementation

The core technical challenge lies in the implementation of the transformer model itself. Modern AI frameworks rely heavily on high-level languages and optimized linear algebra libraries that abstract away the complexities of the underlying CPU. Running such a model on the PDP-11 requires the developer to manually translate every mathematical operation—every matrix multiplication, every attention mechanism—into raw PDP-11 assembly language.

This process involves meticulous resource management, particularly concerning the 64KB RAM limitation. Every byte must be accounted for, forcing the developer to optimize the model's weights and operational flow down to the bit level. In modern computing, memory access is often the bottleneck; on the PDP-11, memory is the defining constraint. The developer must structure the entire model to fit within this minuscule address space while maintaining the computational integrity required for a functional transformer.

The choice of the PDP-11 is deliberate, selecting a platform that was foundational to early computing but has been largely superseded by RISC and CISC architectures. The success of the project proves that the algorithmic complexity of the transformer model is not inherently tied to the clock speed or memory capacity of the host machine, but rather to the efficiency of the code written for that specific instruction set. It is a testament to the power of algorithmic optimization over brute-force scaling.

Implications for Edge Computing and Resource Constraints

The successful deployment of AI on such antique hardware has immediate implications for the field of edge computing. Edge devices—such as IoT sensors, embedded systems, and microcontrollers—are increasingly constrained by power, size, and memory. These devices cannot afford the computational overhead of cloud-based LLMs.

The PDP-11 project serves as a powerful proof-of-concept for extreme model quantization and optimization. When resources are severely limited, the focus shifts from building larger models to making existing models run smaller and faster. This mirrors real-world challenges in deploying AI in remote medical devices, autonomous vehicles, or industrial monitoring systems where connectivity and power are unreliable.

Furthermore, the project emphasizes the critical role of domain-specific compilers and specialized toolchains. Developing an AI model for a modern GPU is one problem; developing it for a 6 MHz, 64KB CPU is another entirely. The necessary toolchain must account for the specific registers, addressing modes, and instruction set quirks of the PDP-11, creating a highly specialized pathway for computational deployment that bypasses standard modern ML pipelines.

Reassessing the AI Hardware Dependency Cycle

This demonstration forces a critical discussion regarding the assumed linear relationship between hardware advancement and AI capability. The industry often operates under the assumption that the next breakthrough requires the next generation of silicon—more cores, more transistors, more memory bandwidth. The PDP-11 project challenges this assumption by proving that the intelligence resides in the code, not just the copper.

The ability to run a transformer model on this vintage machine suggests that many perceived computational bottlenecks are, in fact, problems of software optimization and algorithmic pruning. Instead of chasing the next teraflop rating, research efforts could pivot toward developing generalized methods for model compression and efficient execution across vastly heterogeneous and resource-starved architectures.

From a historical perspective, the project also serves as a powerful educational tool. It provides a tangible, working example of how fundamental computing principles—data structures, memory addressing, and algorithmic flow—were established, allowing modern engineers to appreciate the foundational layers upon which today's complex AI stacks are built. It is a reminder that the history of computing is a continuous cycle of constraint leading to innovation.