Overview

Researchers have unveiled LPM 1.0, an AI model capable of generating continuous, real-time video of a speaking or singing character using only a single source photograph. This development represents a significant technical leap in synthetic media, moving beyond short, pre-rendered clips to stable, extended-duration video generation. The model processes complex inputs—text, audio, and reference images—simultaneously to produce highly detailed, lip-synchronized speech alongside subtle, natural facial expressions.

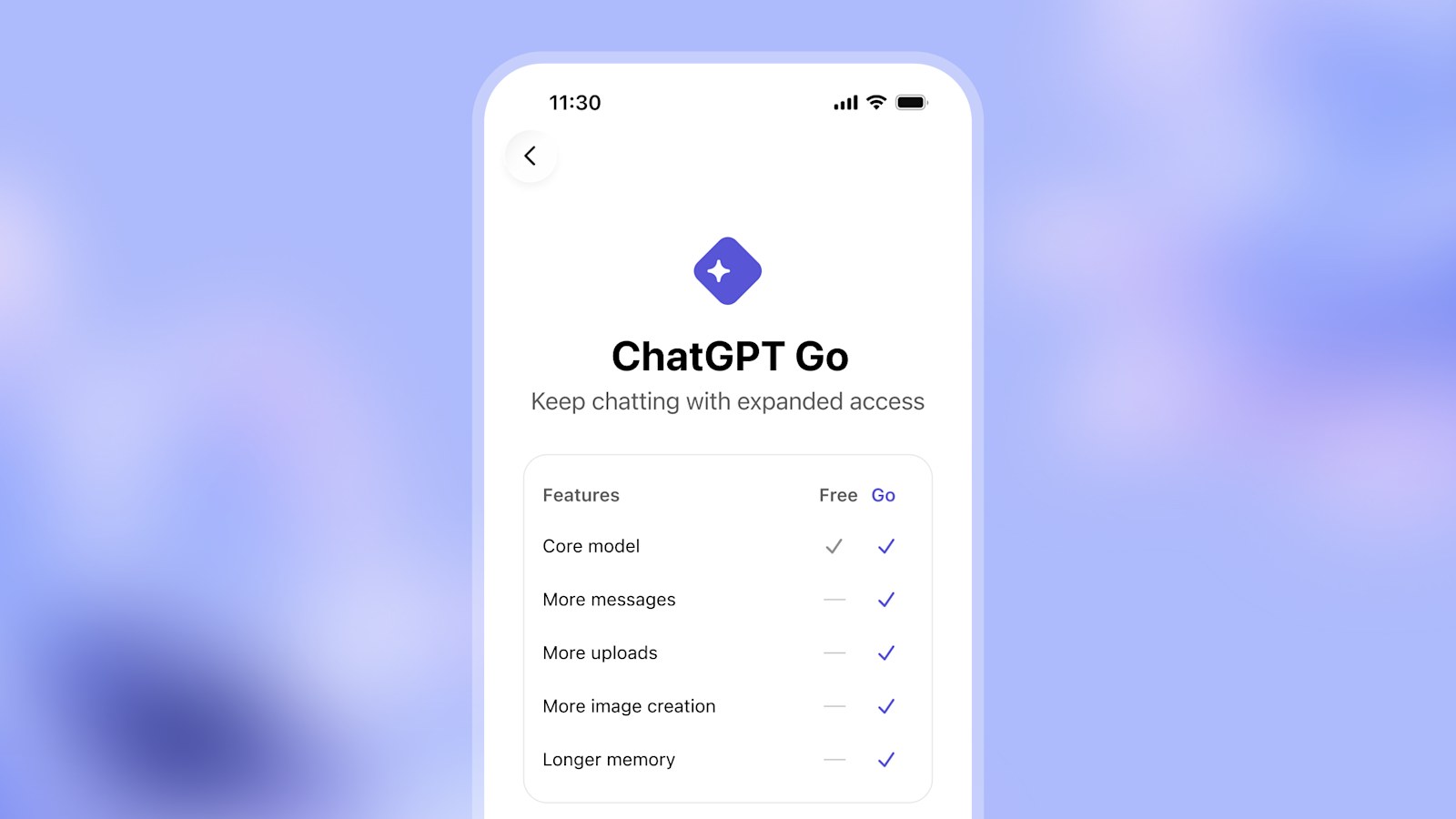

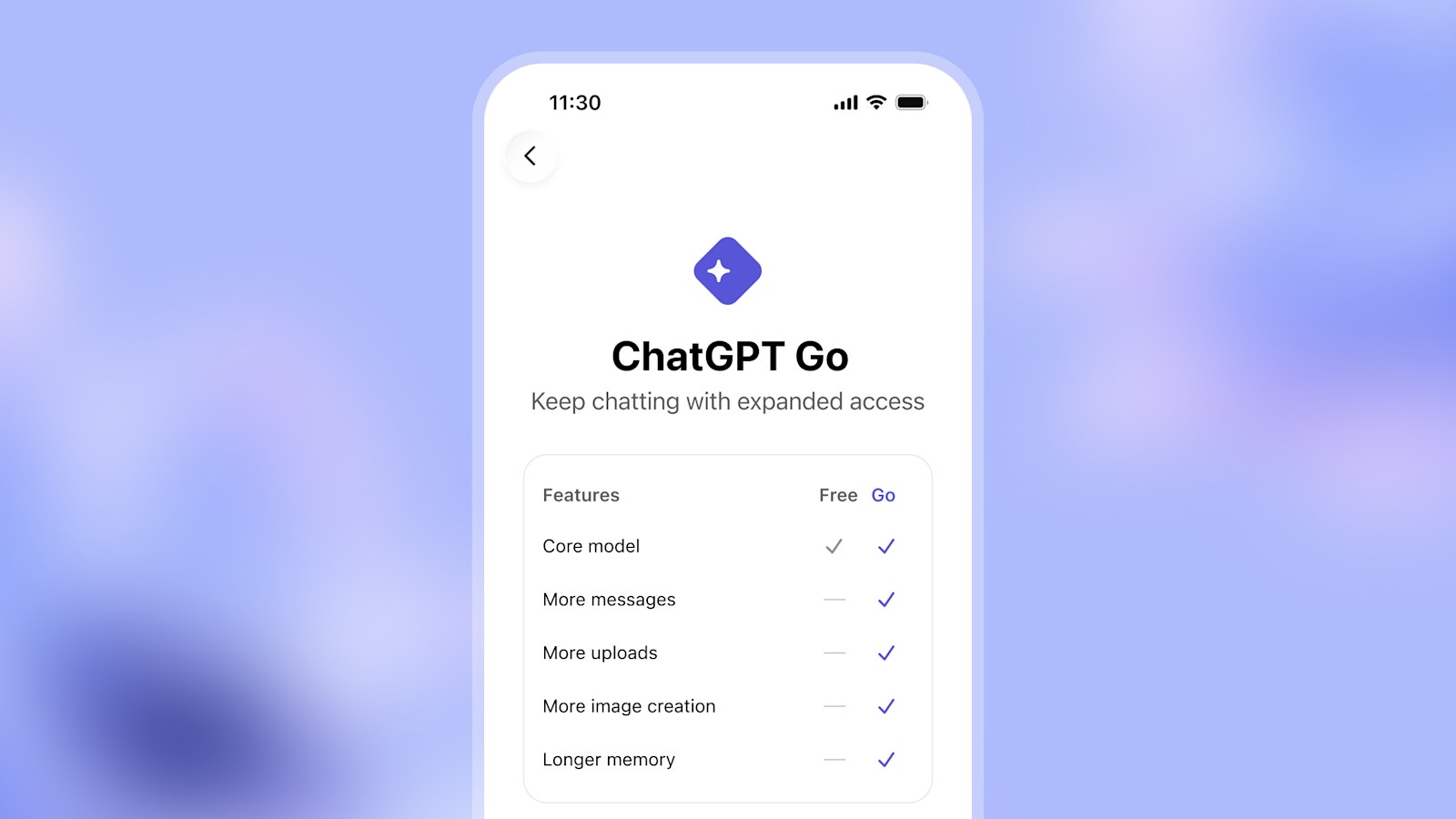

The system's ability to stay coherent over long runs is particularly notable: LPM 1.0 is reported to sustain stable video generation for up to 45 minutes. The technology is also designed to plug directly into existing voice AI systems, such as the one used by ChatGPT, turning a static image into a visual conversational partner.

This capability means that AI can now create visual dialogues that mimic human interaction across diverse styles, including photorealistic human faces, anime characters, and 3D game assets, all without requiring extensive additional training on the specific character. The underlying mechanics point toward a future where visual communication is decoupled from physical presence.

Multi-Granularity Conditioning and Conversational States

The technical core of LPM 1.0 lies in what the researchers term "multi-granularity identity conditioning." Instead of relying solely on the initial image, the model incorporates reference material from various angles and with different emotional expressions. This mechanism allows the AI to accurately synthesize complex human details—such as specific wrinkles tied to emotion, teeth visibility, or profile views—by pulling them directly from the provided reference data.

This detailed conditioning allows the AI to recognize and simulate multiple conversational states. When the character is listening, the model generates reactive cues, such as natural head nods or shifts in gaze, based on the incoming audio stream. When the character is speaking, the generated audio drives the lip movements and body language. During silence or natural pauses, LPM generates believable "idle behavior" from textual instructions, so the character does not freeze or glitch in a way that would push it into the uncanny valley.
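The three conversational states described above can be pictured as a small state selector. The following Python sketch is purely illustrative and is not taken from LPM 1.0: the names `ConversationalState`, `select_state`, and `driving_signal` are hypothetical, and the rule that speaking takes priority when both audio streams are active is an assumption made only for this example.

```python
from enum import Enum


class ConversationalState(Enum):
    """Hypothetical labels for the three states the article describes."""
    LISTENING = "listening"
    SPEAKING = "speaking"
    IDLE = "idle"


def select_state(user_audio_active: bool,
                 character_audio_active: bool) -> ConversationalState:
    """Choose a state from two voice-activity flags.

    Assumption: if both streams are active at once, the character's own
    speech wins. The source does not say how overlap is handled.
    """
    if character_audio_active:
        return ConversationalState.SPEAKING
    if user_audio_active:
        return ConversationalState.LISTENING
    return ConversationalState.IDLE


def driving_signal(state: ConversationalState) -> str:
    """Name the input that, per the article, drives each state."""
    signals = {
        ConversationalState.SPEAKING: "generated speech audio",
        ConversationalState.LISTENING: "incoming audio stream",
        ConversationalState.IDLE: "text instruction",
    }
    return signals[state]


if __name__ == "__main__":
    # Walk through a short, made-up sequence of voice-activity flags.
    for user, character in [(True, False), (False, True), (False, False)]:
        state = select_state(user, character)
        print(state.value, "<-", driving_signal(state))
```

The point of the sketch is only the mapping from state to driving input: audio from the other party while listening, the character's own generated audio while speaking, and a text instruction during silence.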

Beyond live interaction, the model also supports offline video generation from existing audio files. This is useful for content creators, who can animate podcasts or movie dialogue without a live chat interface. The current version does not accept video as a control input, though the framework appears designed to extend to richer multimodal inputs in the future.

The Infrastructure of Synthetic Identity

The implications of LPM 1.0 extend far beyond simple novelty video generation. The model establishes a new infrastructure for synthetic identity, suggesting a shift in how AI systems are expected to interact with users. The goal is clearly to move beyond text-only or voice-only communication, creating visually believable characters that exhibit emotional depth and eye contact.

For industries ranging from education and virtual companions to customer service and gaming, the ability to deploy a persistent, animated, and highly responsive AI avatar is a paradigm shift. Imagine educational modules in which historical figures deliver lectures with nuanced facial expressions, or customer service bots that hold a natural, empathetic conversation. The reported stability over runs of up to 45 minutes is a critical selling point, suggesting that the technology could be viable for sustained, enterprise-level interaction.

However, the sophistication of the technology is inseparable from its inherent risks. The leap from simple deepfakes to real-time, long-form, multi-state character generation creates a dangerous infrastructure. The model's capacity to generate convincing, emotionally resonant, and highly personalized video content makes it an ideal tool for malicious actors.

Deepfake Risk and Ethical Guardrails

The immediate and most pressing concern surrounding LPM 1.0 is its proximity to a fully realized deepfake weapon. The ability to generate convincing, long-form video of an individual speaking and reacting to prompts—even if the initial subject is a generic AI face—presents unprecedented risks of fraud, manipulation, and targeted impersonation. The technology could be exploited to create fabricated evidence, disseminate disinformation, or execute sophisticated phishing scams that are visually indistinguishable from reality.

The development team, while acknowledging the immense potential, has been cautious, emphasizing that LPM 1.0 remains purely a research project with no public release planned. They have noted that the generated videos still show measurable artifacts under quantitative analysis, leaving a noticeable gap in quality compared with real video. This caution is paired with a firm stance: access will only be considered "if and when adequate safeguards and responsible-use frameworks are firmly in place."

This stance highlights a critical industry failure point: the pace of technological capability is vastly outpacing the establishment of ethical and legal guardrails. The deployment of such powerful tools requires a global, coordinated effort involving researchers, regulators, and platform providers to mitigate the inevitable misuse.